The dQUOB System is a compiler and run-time environment for creating computational entities, called quoblets, that are inserted into high-volume data streams. By data streams we mean the flows of data found in large-scale visualizations, in video streaming to many distributed users, and in high-volume business transactions. Our approach is suitable anywhere that (1) information flows to a client from one or more sources, (2) computations must be performed on the data before it reaches the client, and (3) the client wants control over what arrives as the final product. The dQUOB System's unique feature is that it lets a user specify application-specific queries to control the data flow, that is, queries that examine the data flow and make decisions before computations are performed. Coupling queries with computations improves the decision-making of each computational entity and, more broadly, increases the scalability of the entire data flow.
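To make the query-before-computation idea concrete, here is a minimal C++ sketch. It is not dQUOB's actual API: the `Event` record, the `Quoblet` class, and its `process` method are hypothetical names chosen for illustration. The client-supplied query is evaluated on each event, and the action (standing in for an expensive computation) runs only on events the query accepts.

```cpp
#include <cassert>
#include <functional>
#include <utility>
#include <vector>

// Hypothetical event record; real dQUOB events would be ECho event types.
struct Event {
    int timestep;
    double value;
};

// Sketch of a quoblet: a client-supplied query decides, per event,
// whether the (possibly expensive) action runs and its result is forwarded.
class Quoblet {
public:
    using Query = std::function<bool(const Event&)>;
    using Action = std::function<Event(const Event&)>;

    Quoblet(Query q, Action a) : query_(std::move(q)), action_(std::move(a)) {
        assert(query_ && action_);  // both callables must be set
    }

    // Processes one batch of incoming events and returns what reaches the client.
    std::vector<Event> process(const std::vector<Event>& in) {
        std::vector<Event> out;
        for (const Event& e : in) {
            if (query_(e)) {               // decide before computing
                out.push_back(action_(e)); // compute only on accepted events
                ++actionsRun_;
            }
        }
        return out;
    }

    // Number of times the action has actually been executed.
    int actionsRun() const { return actionsRun_; }

private:
    Query query_;
    Action action_;
    int actionsRun_ = 0;
};
```

For example, a query such as `e.timestep % 2 == 0` lets a client receive only every other timestep, and the action never runs on the discarded events.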

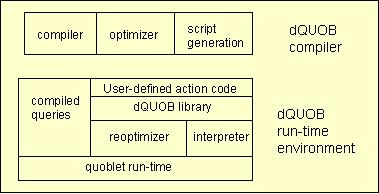

The logical architecture of a dQUOB system, shown in Fig. 1, consists of a compiler and a run-time environment. The compiler accepts queries and their associated actions, specified in a form resembling an active database event-action rule (E-A rule). The rule is compiled and passed through the optimizer, where static optimization heuristics are applied. The compiler back-end then transforms the optimized E-A rule into a script.
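The E-A rule structure described above can be sketched as a conjunction of selection predicates guarding an action. This is an illustration under stated assumptions, not the dQUOB compiler's internal representation: `Predicate`, `EARule`, `estimatedCost`, and `optimizeStatic` are hypothetical, and the heuristic shown (evaluate cheaper predicates first so that a failing test short-circuits the rest) is one plausible static heuristic, not necessarily one dQUOB applies.

```cpp
#include <algorithm>
#include <cassert>
#include <functional>
#include <string>
#include <vector>

struct Event { int timestep; double value; };

// One selection predicate of a rule's query, with a static cost estimate
// (hypothetical units) that an optimizer could use before any data is seen.
struct Predicate {
    std::string name;
    double estimatedCost;
    std::function<bool(const Event&)> test;
};

// Sketch of an event-action rule: when an event arrives,
// if every predicate holds, then run the action.
struct EARule {
    std::vector<Predicate> predicates;
    std::function<void(const Event&)> action;

    // Hypothetical static heuristic: cheaper predicates first, so the
    // conjunction can short-circuit before costly tests run.
    void optimizeStatic() {
        std::stable_sort(predicates.begin(), predicates.end(),
                         [](const Predicate& a, const Predicate& b) {
                             return a.estimatedCost < b.estimatedCost;
                         });
    }

    // Returns true if the action fired for this event.
    bool fire(const Event& e) const {
        for (const Predicate& p : predicates) {
            if (!p.test(e)) return false;  // stop at the first failing predicate
        }
        assert(action);  // an action must be attached before firing
        action(e);
        return true;
    }

    // Current evaluation order, by predicate name.
    std::vector<std::string> order() const {
        std::vector<std::string> names;
        for (const Predicate& p : predicates) names.push_back(p.name);
        return names;
    }
};
```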

The run-time environment consists of a dQUOB library for building compiled code objects from scripts, an interpreter that executes the script, a reoptimizer that applies further optimizations at run time (because data behavior can only be understood once the data is flowing), and a quoblet runtime that controls it all. The remaining two pieces, the compiled queries and the user-defined action code, contain the user-supplied E-A rules.
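Run-time reoptimization can be illustrated by letting a conjunctive query reorder its own predicates based on observed selectivity, so that the predicate rejecting the most events is tested first. This is a generic adaptive-ordering technique assumed here for illustration; `MonitoredPredicate`, `AdaptiveConjunction`, and the `period` parameter are hypothetical, and dQUOB's reoptimizer may gather different statistics and apply different policies.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <functional>
#include <string>
#include <utility>
#include <vector>

struct Event { int timestep; double value; };

// A predicate plus counters gathered while the query executes.
struct MonitoredPredicate {
    std::string name;
    std::function<bool(const Event&)> test;
    std::size_t evaluated = 0;
    std::size_t passed = 0;

    // Observed fraction of evaluated events that passed (1.0 before any data).
    double selectivity() const {
        return evaluated == 0 ? 1.0 : static_cast<double>(passed) / evaluated;
    }
};

class AdaptiveConjunction {
public:
    AdaptiveConjunction(std::vector<MonitoredPredicate> preds, std::size_t period)
        : preds_(std::move(preds)), period_(period) {
        assert(period_ > 0);  // reoptimize every `period` events
    }

    // Evaluates the conjunction, updating statistics; every `period`
    // events the predicate order is recomputed from those statistics.
    bool evaluate(const Event& e) {
        bool result = true;
        for (MonitoredPredicate& p : preds_) {
            ++p.evaluated;
            if (!p.test(e)) { result = false; break; }
            ++p.passed;
        }
        if (++seen_ % period_ == 0) reoptimize();
        return result;
    }

    // Most selective (lowest pass rate) predicate first.
    void reoptimize() {
        std::stable_sort(preds_.begin(), preds_.end(),
                         [](const MonitoredPredicate& a, const MonitoredPredicate& b) {
                             return a.selectivity() < b.selectivity();
                         });
    }

    std::string first() const { return preds_.front().name; }

private:
    std::vector<MonitoredPredicate> preds_;
    std::size_t period_;
    std::size_t seen_ = 0;
};
```

One simplification is worth noting: a predicate's counters reflect only the events that passed the predicates ahead of it, so the measured selectivities are conditional rather than independent. A production reoptimizer would need to account for that bias, for example by occasionally evaluating predicates out of order.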

The dQUOB System's strengths are its:

The architecture of the runtime, shown below, consists of two threads that communicate in a reader/writer fashion via a queue. The code is written in C++ and uses Tcl for script interpretation. It is useful for anyone who wants to write their own computational data stream objects on top of the ECho event channel infrastructure. It is small and highly modular, and it even includes its own test harness with real application data, so you can build a quoblet, stream data to it, and have it forward the data to a client out of the box.
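The two-thread reader/writer structure can be sketched with a standard blocking queue built from `std::mutex` and `std::condition_variable`. This sketch uses only the C++17 standard library, not ECho or Tcl; `EventQueue`, `runPipeline`, and the even-value query are hypothetical stand-ins for the quoblet's receiving thread and its query-evaluating thread.

```cpp
#include <cassert>
#include <condition_variable>
#include <mutex>
#include <optional>
#include <queue>
#include <thread>
#include <utility>
#include <vector>

// Blocking FIFO shared by a writer (receives events) and a reader (runs queries).
template <typename T>
class EventQueue {
public:
    void push(T item) {
        {
            std::lock_guard<std::mutex> lock(mu_);
            assert(!closed_);  // pushing after close() is a logic error
            items_.push(std::move(item));
        }
        cv_.notify_one();
    }

    // Signals that no more items will arrive.
    void close() {
        {
            std::lock_guard<std::mutex> lock(mu_);
            closed_ = true;
        }
        cv_.notify_all();
    }

    // Blocks until an item is available; returns std::nullopt once closed and drained.
    std::optional<T> pop() {
        std::unique_lock<std::mutex> lock(mu_);
        cv_.wait(lock, [this] { return !items_.empty() || closed_; });
        if (items_.empty()) return std::nullopt;
        T item = std::move(items_.front());
        items_.pop();
        return item;
    }

private:
    std::mutex mu_;
    std::condition_variable cv_;
    std::queue<T> items_;
    bool closed_ = false;
};

// Hypothetical stand-in for a quoblet: a writer thread enqueues incoming
// events; a reader thread dequeues them and keeps those that pass a query.
std::vector<int> runPipeline(const std::vector<int>& incoming) {
    EventQueue<int> queue;
    std::vector<int> forwarded;  // touched only by the reader until join()

    std::thread reader([&] {
        while (std::optional<int> ev = queue.pop()) {
            if (*ev % 2 == 0) forwarded.push_back(*ev);  // query: even values only
        }
    });
    std::thread writer([&] {
        for (int ev : incoming) queue.push(ev);
        queue.close();
    });

    writer.join();
    reader.join();
    return forwarded;
}
```

Because there is a single writer and the queue is FIFO, events reach the reader in arrival order; `close()` lets the reader drain the remaining events and exit cleanly instead of blocking forever. On most POSIX toolchains this needs to be compiled with `-pthread`.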

Be aware that because a relatively small data file is included (small by scientific-data standards), the gzipped distribution is 4 MB. Questions or suggestions? Please e-mail Beth Plale.

dQUOB: Managing Large Data Flows by Dynamic Embedded Queries, Beth Plale and Karsten Schwan, to appear in High Performance Distributed Computing (HPDC2000), 2000.

Extended abstract available as: postscript, pdf.

Full version available as technical report GIT-TR-00-07: postscript, pdf.

Run-time Detection in Parallel and Distributed Systems: Application to Safety-Critical Systems, Beth Plale and Karsten Schwan, Proc. 19th Int'l Conf. on Distributed Computing Systems (ICDCS'99), 1999. Available as: compressed postscript, pdf.

Software Approach to Hazard Detection Using On-line Analysis of Safety Constraints, Beth (Plale) Schroeder, Sudhir Aggarwal, and Karsten Schwan, Proc. 16th Symposium on Reliable Distributed Systems (SRDS'97), October 1997. Available as: compressed postscript, pdf.

Language Issues in Hazard Detection Using Queries, Beth Plale and Karsten Schwan, Technical Report GIT-CC-97-36, College of Computing, Georgia Institute of Technology, 1997. Available as: postscript, pdf.

Software Approach to Hazard Detection Using On-line Analysis of Safety Constraints, Beth Plale, Ph.D. Thesis, State University of New York at Binghamton, 1998. Available as: compressed postscript.