Project 2: Local Feature Matching

In this assignment we create a local feature matching algorithm which is a simplified version of the SIFT pipeline.Obtaining Interest Points

We use the Harris Corner Detection algorithm for keypoint detection and localization. Basically we evaluate the cornerness score for each point in the image. We discard points for which the cornerness score is less than 0.001. We also discard points which are within the feature_width/2 pixels of the boundaries. Further we use a sliding window on each corner point to check if it is the maximum within a neighborhood ([3 3], [feature_width/2 feature_width/2]). If it is not, it is discarded. The keypoints are then sorted in decreasing order of cornerness and the best 1500 are returned.Extracting Features

We use the standard gradient orientation histogram in 8 bins as features. The feature length is 128.Feature Matching

We use the nearest neighbor distance ratio to estimate confidence of matches.Bells & Whistles:

- KD-Tree based nearest neighbor search

We use the knnsearch function to do a KDTree based nearest 2 neighbor search in features2 for every entry in features1. Thereafter we use obtained values to compute the NNDR. - Principal Components Analysis to reduce descriptor dimension

To reduce the dimensionality of the features from 128 to 32, I generate a PCA basis from the 90 or so images in the extra_data.zip provided on the project page. The combined percentage variance for 32 dimensions comes out to be about 62.38%. We also extract a global mean feature mu. I have created a separate function match_feature_pca() which first uses the computer PCA basis, W to reduce the dimensionality of the features to 32 and then perform the matching algorithm (kNN).

Tweaks & Results

| #interest points | feature_width | non-max suppression win size | PCA | % Notre Dame | % Mt. Rushmore | % Episcopal Gaudi |

| <=1500 | 32 | [8 8] | yes | 80 | 65 | 7 |

| <=1500 | 32 | [8 8] | no | 85 | 88 | 5 |

| as many avbl | 32 | [8 8] | yes | 90 | 100 | 13 |

| as many avbl | 32 | [8 8] | no | 92 | 99 | 17 |

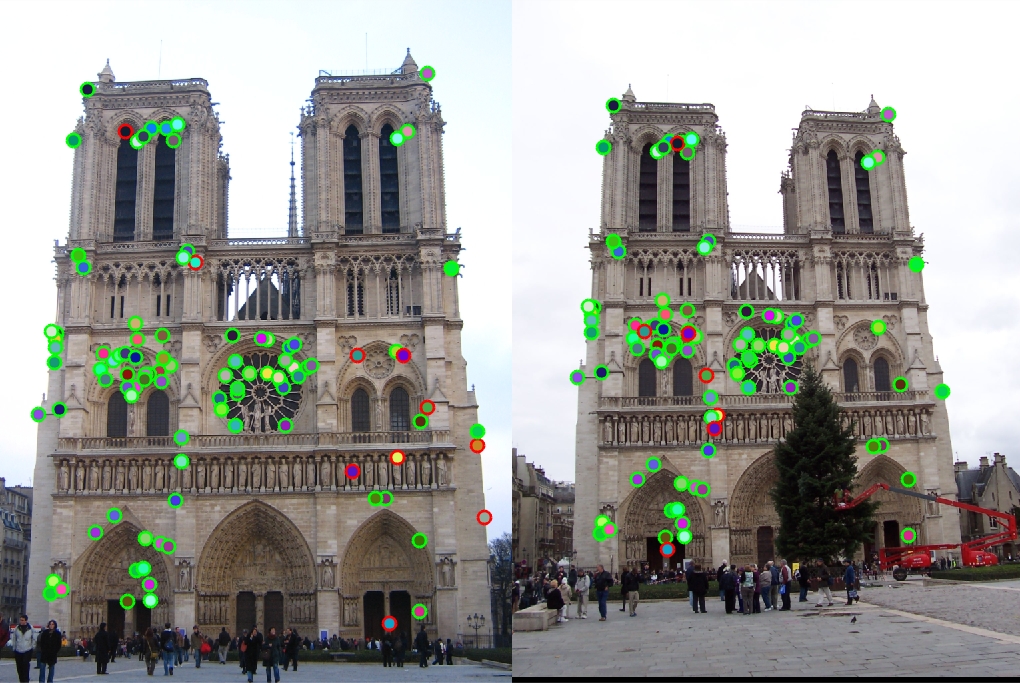

Notre Dame | 90 good matches, 10 bad matches

Notre Dame | 90 good matches, 10 bad matches

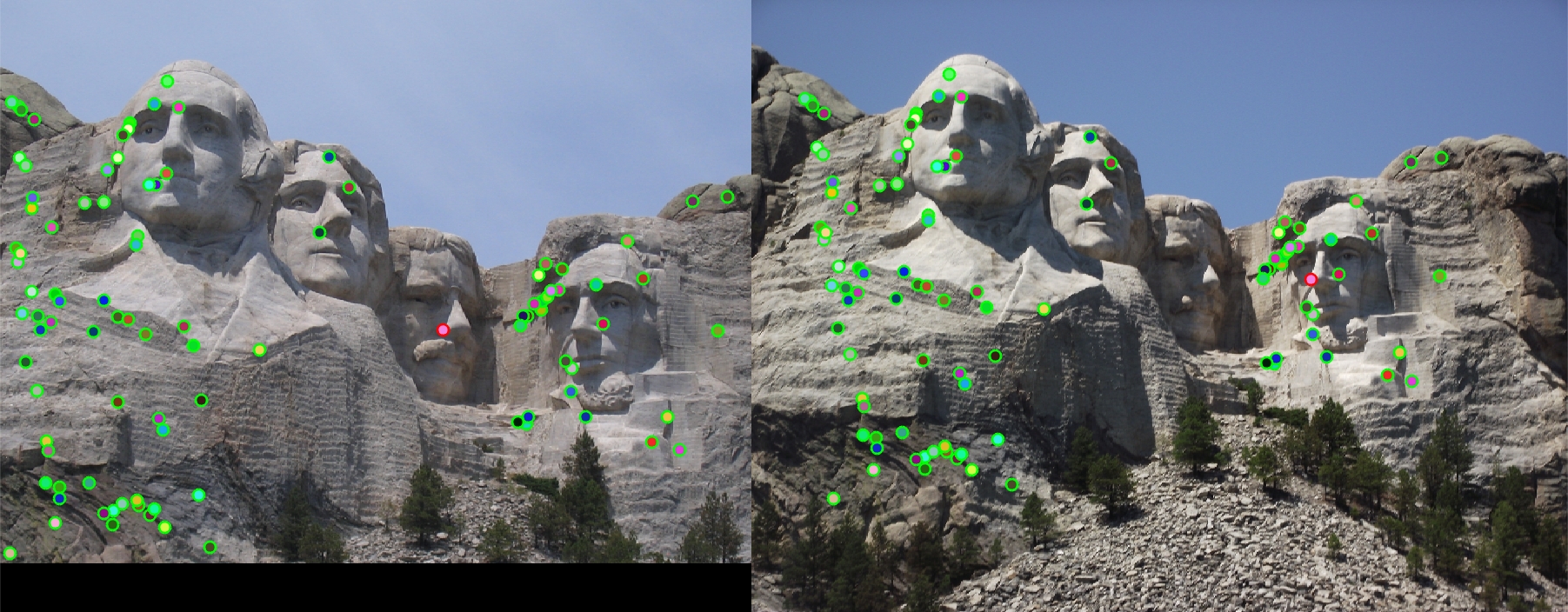

Mt. Rushmore | 99 good matches, 1 bad match

Mt. Rushmore | 99 good matches, 1 bad match

Episcopal Gaudi | 13 good matches, 87 bad matches

Episcopal Gaudi | 13 good matches, 87 bad matches

Capricho Gaudi | Majority of points seem to be matched correctly

Capricho Gaudi | Majority of points seem to be matched correctly

House | Almost all points seem to be matched correctly

House | Almost all points seem to be matched correctly

Pantheon Paris | Only a few points matched correctly

Pantheon Paris | Only a few points matched correctly

Sacre Coeur | Only a few points matched correctly

Sacre Coeur | Only a few points matched correctly

Statue of Liberty | A Fair number of points seem to have been matched correctly

Statue of Liberty | A Fair number of points seem to have been matched correctly