Project 2: Local Feature Matching

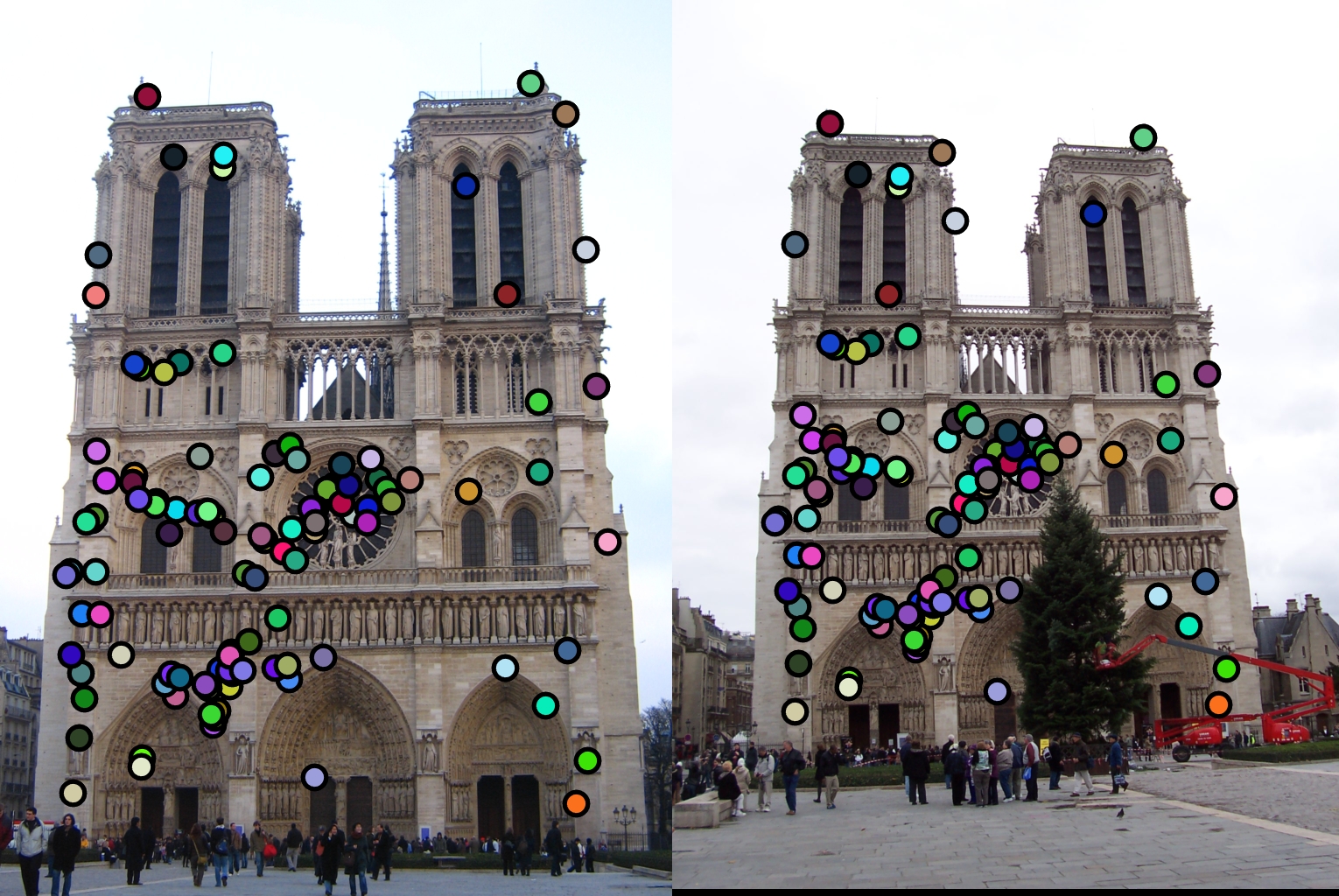

Picture of Notre Dame, the image used for feature matching

The goal of this assignment is instance-level local feature matching across multiple views of the same scene.

These are the three parts of the assignment:

- Implement a Harris corner detector in get_interest_points.m

- Implement a SIFT-like local feature descriptor in get_features.m

- Implement ratio test matching in match_features.m

Part 1

This part covers how I implemented the Harris corner detector to find the interest points.

This generated the interest points seen below.
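As a point of reference, a textbook Harris detector computes image gradients, sums their products over a window to form the second-moment matrix M, scores each pixel with R = det(M) - alpha * trace(M)^2, and keeps local maxima above a threshold. The Python sketch below illustrates that general recipe only; it is not the code in get_interest_points.m, and the box-shaped window, `radius`, and `threshold` are assumptions made for the example.

```python
def harris_response(img, alpha=0.04, radius=1):
    """Return a Harris corner score for every pixel of a 2-D list of floats."""
    h, w = len(img), len(img[0])

    # Central-difference gradients; border pixels are left at zero.
    ix = [[0.0] * w for _ in range(h)]
    iy = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            ix[y][x] = (img[y][x + 1] - img[y][x - 1]) / 2.0
            iy[y][x] = (img[y + 1][x] - img[y - 1][x]) / 2.0

    resp = [[0.0] * w for _ in range(h)]
    for y in range(radius, h - radius):
        for x in range(radius, w - radius):
            # Sum gradient products over a square window. Box weights are an
            # assumption here; a Gaussian window is the more common choice.
            sxx = sxy = syy = 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    gx = ix[y + dy][x + dx]
                    gy = iy[y + dy][x + dx]
                    sxx += gx * gx
                    sxy += gx * gy
                    syy += gy * gy
            det = sxx * syy - sxy * sxy
            trace = sxx + syy
            resp[y][x] = det - alpha * trace * trace
    return resp


def local_maxima(resp, threshold):
    """Return (x, y) positions above the threshold that are 3x3 local maxima."""
    h, w = len(resp), len(resp[0])
    points = []
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            v = resp[y][x]
            if v <= threshold:
                continue
            neighbours = [resp[y + dy][x + dx]
                          for dy in (-1, 0, 1) for dx in (-1, 0, 1)
                          if (dy, dx) != (0, 0)]
            if all(v >= n for n in neighbours):
                points.append((x, y))
    return points
```

On a synthetic image containing a bright square, the score is positive at the square's corners, negative along its edges, and zero in flat regions, which is the behaviour the detector relies on.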

Part 2

This part covers the implementation of the SIFT-like feature descriptors.
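To make the idea concrete, here is a minimal Python sketch of a SIFT-like descriptor for a single square patch. It assumes the usual SIFT layout of a 4 by 4 grid of cells with 8 orientation bins each (128 values for a 16 by 16 patch) and leaves out Gaussian weighting, interpolation between bins, and rotation normalisation. The function name and parameters are hypothetical and do not come from get_features.m.

```python
import math


def sift_like_descriptor(patch, cells=4, bins=8):
    """Build a cells*cells*bins gradient-orientation histogram for a square patch."""
    size = len(patch)        # e.g. 16
    cell = size // cells     # pixels per cell side, e.g. 4
    desc = [0.0] * (cells * cells * bins)
    for y in range(size):
        for x in range(size):
            # Central differences, with indices clamped at the patch border.
            gx = patch[y][min(x + 1, size - 1)] - patch[y][max(x - 1, 0)]
            gy = patch[min(y + 1, size - 1)][x] - patch[max(y - 1, 0)][x]
            mag = math.hypot(gx, gy)
            if mag == 0.0:
                continue
            # Map the gradient direction to one of `bins` orientation bins.
            angle = math.atan2(gy, gx) % (2 * math.pi)
            b = int(angle / (2 * math.pi) * bins) % bins
            cy = min(y // cell, cells - 1)
            cx = min(x // cell, cells - 1)
            # Each pixel votes with its gradient magnitude.
            desc[(cy * cells + cx) * bins + b] += mag
    # Normalise to unit length so overall contrast changes cancel out.
    norm = math.sqrt(sum(v * v for v in desc))
    return [v / norm for v in desc] if norm > 0 else desc
```

For a patch whose intensity increases only from left to right, every vote lands in the first orientation bin of each cell, which is a quick sanity check on the binning.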

Part 3

This part covers feature matching using the ratio test.
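The ratio test accepts a match only when the nearest descriptor in the second image is clearly closer than the second nearest. The Python sketch below shows one way to write it. The `max_ratio` value of 0.8 and the confidence score of one minus the ratio are assumptions for illustration, and this `match_features` is a stand-in, not the code in match_features.m.

```python
import math


def match_features(feats1, feats2, max_ratio=0.8):
    """Return (index1, index2, confidence) triples that pass the ratio test."""
    matches = []
    for i, f1 in enumerate(feats1):
        # Distance from this descriptor to every descriptor in the other image.
        dists = sorted((math.dist(f1, f2), j) for j, f2 in enumerate(feats2))
        if len(dists) < 2:
            continue  # the test needs a second-nearest neighbour
        (d1, j1), (d2, _) = dists[0], dists[1]
        # If both distances are zero the match is ambiguous, so reject it.
        ratio = d1 / d2 if d2 > 0 else 1.0
        if ratio < max_ratio:
            matches.append((i, j1, 1.0 - ratio))
    # Most confident matches first.
    matches.sort(key=lambda m: m[2], reverse=True)
    return matches
```

A descriptor that sits equally close to two candidates has a ratio of 1 and is dropped, which is how the test filters out repeated structure such as rows of identical windows.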

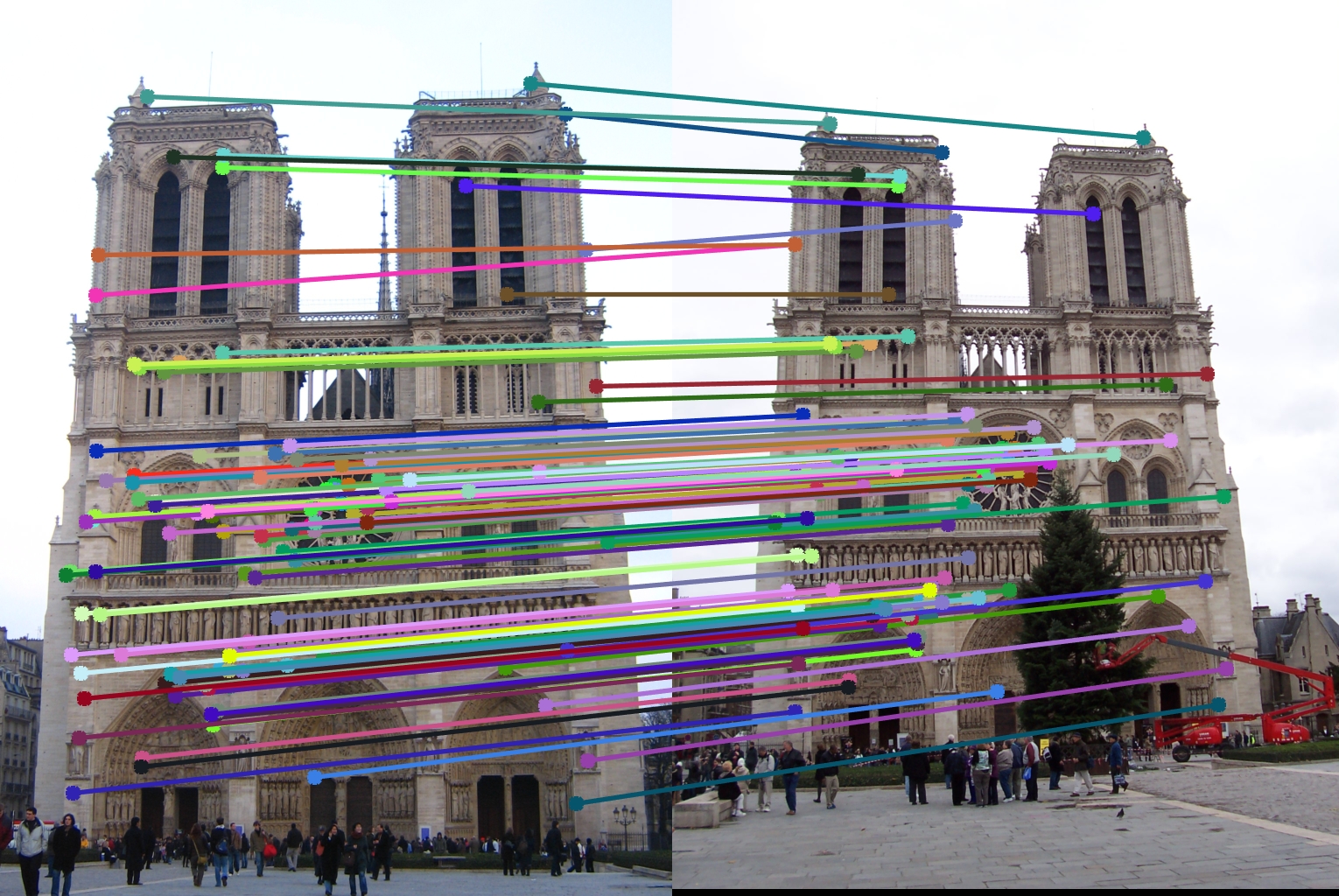

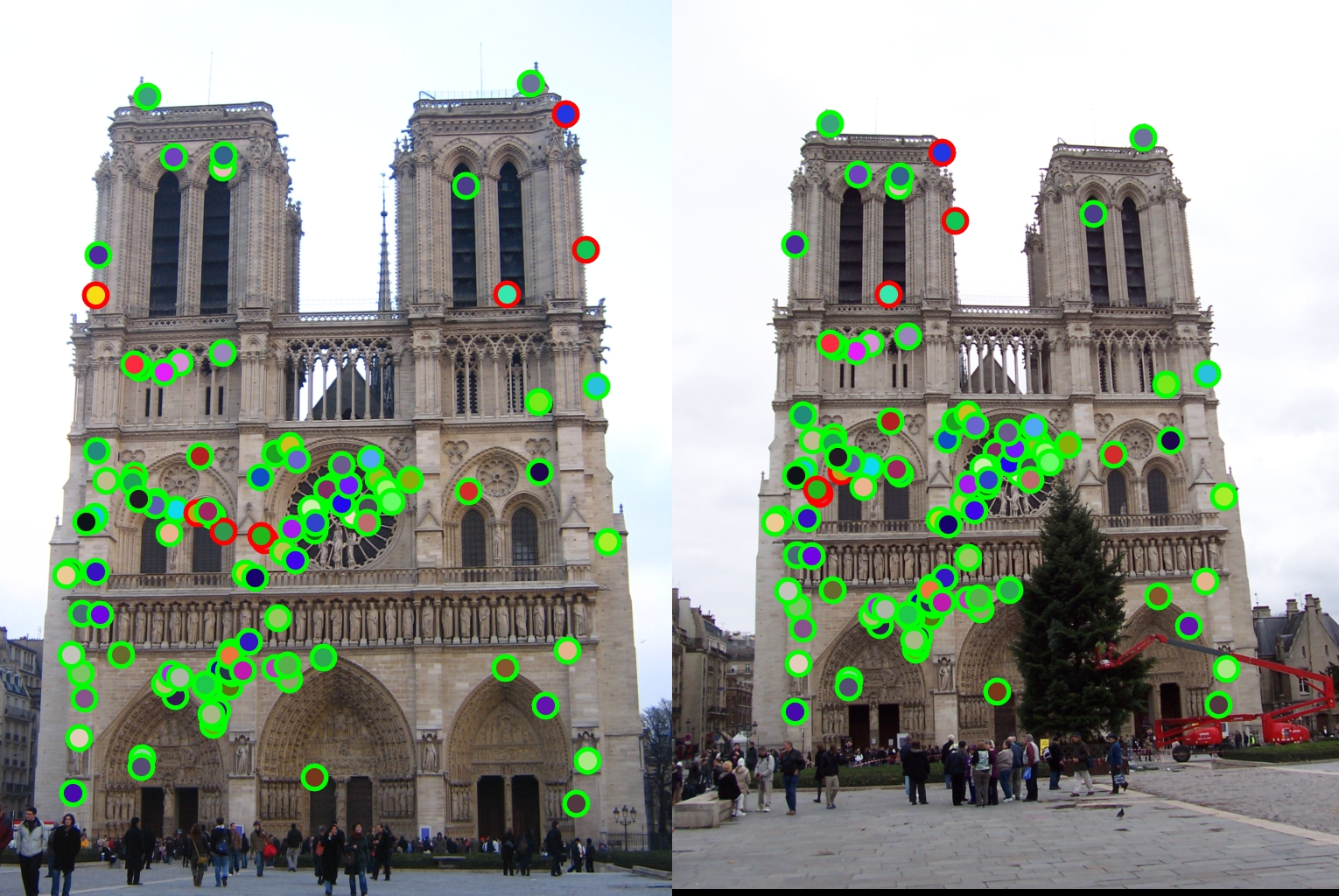

The results are shown below.

The final results for the Notre Dame image are shown below: 102 good matches and 8 bad matches, for 92.73% accuracy. The algorithm performed very well on this image.

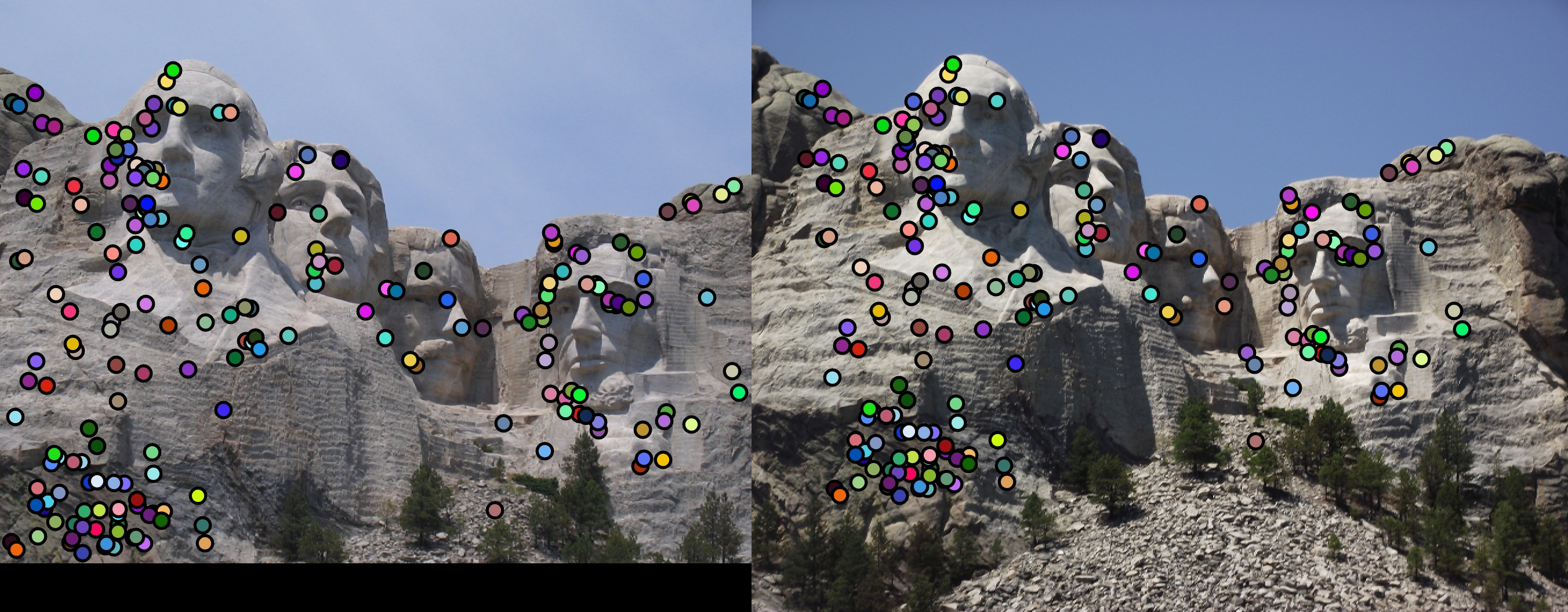

Other images

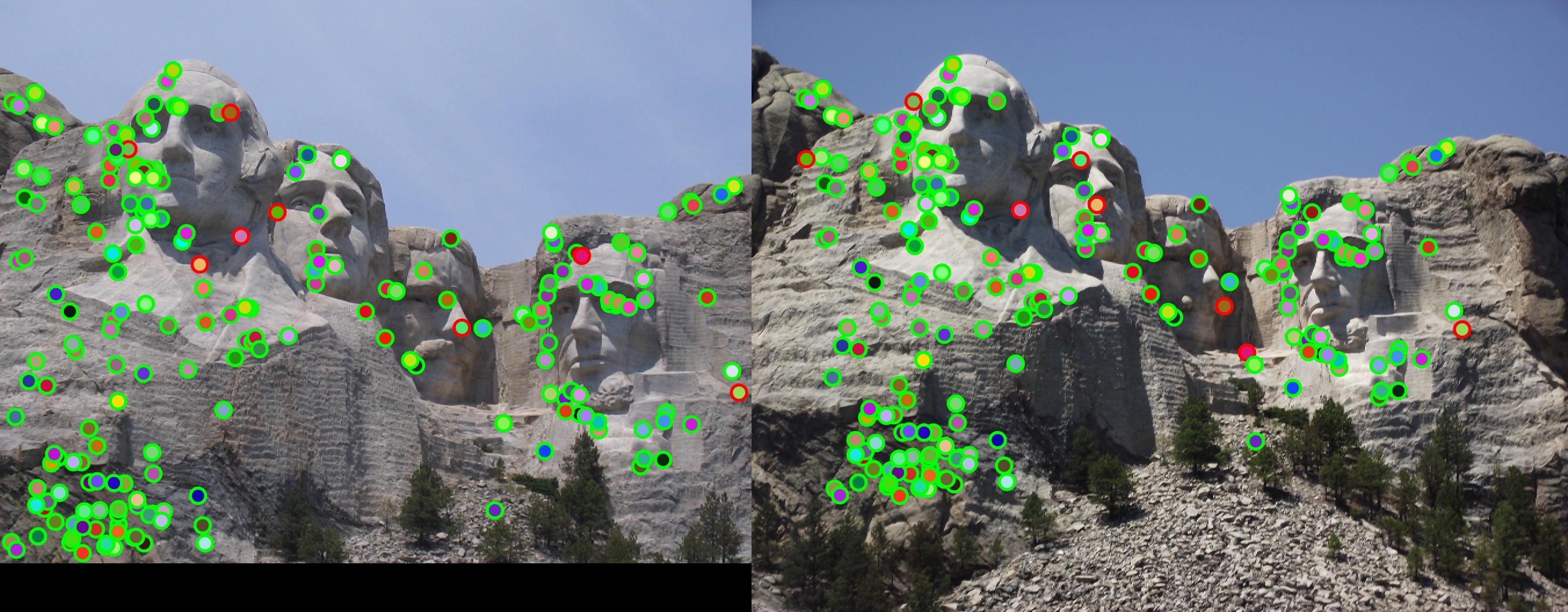

These are the results for Mount Rushmore: 199 good matches and 8 bad matches, for 96.14% accuracy. Both the accuracy and the number of matches were excellent, and my algorithm did even better here than on the Notre Dame image.

These are the results for Episcopal Gaudi: 0 good matches and 0 bad matches, so the accuracy is undefined (NaN%). My algorithm could not find any interest points or features at all. This was a rather difficult image.
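The accuracy figures follow from good / (good + bad), and the NaN is what a 0 / 0 division produces when no matches are returned. A small Python check of the arithmetic, with a hypothetical helper name:

```python
import math


def match_accuracy(good, bad):
    """Percentage of matches that are correct; NaN when there are no matches."""
    total = good + bad
    if total == 0:
        return math.nan  # 0 / 0 is undefined, hence the NaN in the report
    return 100.0 * good / total


for name, good, bad in [("Notre Dame", 102, 8),
                        ("Mount Rushmore", 199, 8),
                        ("Episcopal Gaudi", 0, 0)]:
    print(f"{name}: {match_accuracy(good, bad):.2f}%")
```

This gives 102 / 110 = 92.73% and 199 / 207 = 96.14%, matching the numbers reported above.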

Additions and tweaking of SIFT parameters

I stuck to the baseline implementation, except that I varied the alpha parameter of the Harris detector. Increasing it from 0.04 to 0.05 reduced the number of features found. Increasing it further to 0.06 reduced the number of matches but raised accuracy by 2%.
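The drop in feature count is what the Harris score predicts. Written in terms of the eigenvalues of the second-moment matrix, the score is lam1 * lam2 - alpha * (lam1 + lam2)^2, so a larger alpha lowers every score and pushes the weaker, more edge-like points under the threshold first. The Python sketch below shows the effect on a handful of made-up eigenvalue pairs; the pairs and the threshold are hypothetical and not taken from the assignment data.

```python
def harris_score(lam1, lam2, alpha):
    """Harris score expressed through the eigenvalues of the second-moment matrix."""
    return lam1 * lam2 - alpha * (lam1 + lam2) ** 2


# Hypothetical eigenvalue pairs and threshold, chosen only for illustration.
CANDIDATES = [(1.0, 1.0), (1.0, 0.5), (1.0, 0.11), (1.0, 0.1)]
THRESHOLD = 0.04


def count_kept(alpha):
    """Number of candidates whose score stays above the threshold."""
    return sum(1 for lam1, lam2 in CANDIDATES
               if harris_score(lam1, lam2, alpha) > THRESHOLD)


for alpha in (0.04, 0.05, 0.06):
    print(alpha, count_kept(alpha))
```

The points that survive a higher alpha are the ones with two large eigenvalues, that is, the most corner-like ones, which is consistent with fewer but more reliable matches.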

Because the frame size is fixed, I also found that using images of similar size helps accuracy, since the fixed frame then covers a comparable part of the scene in each image.

Because the frame is small, only 16 by 16 pixels, the Gaussian used to compute gradients works better with a small variance. I decreased the variance to improve performance.
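One way to see why a small variance suits a 16 by 16 frame is to compare how many pixels the Gaussian effectively covers. The Python sketch below builds a normalised 1-D Gaussian and measures the centred span that holds 95% of its weight. The helper names, the 95% figure, and the sigma values in the usage loop are assumptions for illustration; the values used in the assignment are not stated here.

```python
import math


def gaussian_kernel_1d(sigma, radius):
    """Normalised 1-D Gaussian weights for offsets -radius..radius."""
    weights = [math.exp(-(k * k) / (2.0 * sigma * sigma))
               for k in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def effective_width(kernel, mass=0.95):
    """Smallest centred span of taps holding at least `mass` of the weight."""
    centre = len(kernel) // 2
    acc = kernel[centre]
    half = 0
    while acc < mass and half < centre:
        half += 1
        acc += kernel[centre - half] + kernel[centre + half]
    return 2 * half + 1


for sigma in (1.0, 3.0):
    print(sigma, effective_width(gaussian_kernel_1d(sigma, radius=8)))
```

With sigma = 1 the bulk of the weight sits within 5 pixels, while a sigma of 3 spreads it over most of a 16-pixel frame, so the gradients inside one frame get blurred together.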

Setting the gradient threshold to 0.25 also changed little, since most values were already below 0.25.