Proj. 3 - Camera Calibration and Fundamental Matrix Estimation with RANSAC

This project is on feature matching between a pair of images. The first part deals with camera calibration and calculation of the camera centre. Following this, the fundamental matrix estimation and RANSAC optimization are implemented, which are part of the feature point matching.

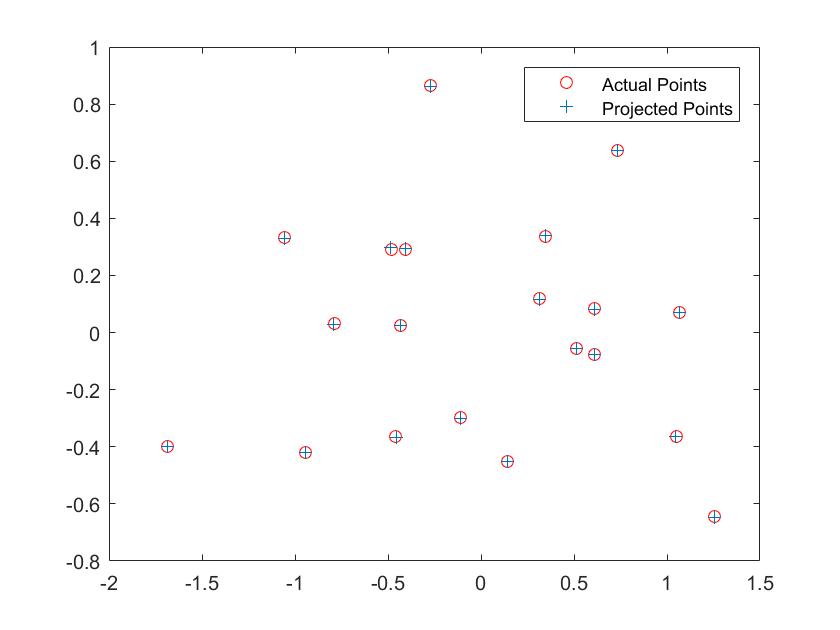

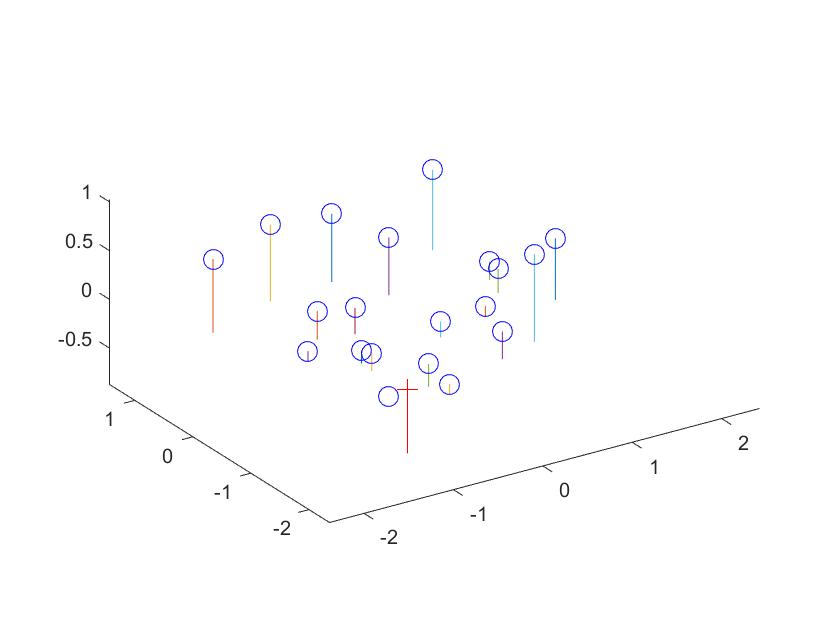

Estimation of Projection Matrix and Camera Centre

The projection matrix M is a 3x4 matrix that maps world 3D coordinates to 2D coordinates in the camera imaging plane. M can be solved using a minimum of 6 known 2D-3D matching correspondence points for the 12 unknowns. This function uses 20 sets of points to solve the non-homogenous form equations obtained by forcing the last element in M to 1.

Example of code

[M , ~] = size(Points_3D);

P = zeros(M*2, 11);

P(1:2:end) = [Points_3D ones(M, 1) zeros(M, 4) -Points_2D(:,1).*Points_3D(:,1) -Points_2D(:,1).*Points_3D(:,2) -Points_2D(:,1).*Points_3D(:,3)];

P(2:2:end) = [zeros(M, 4) Points_3D ones(M, 1) -Points_2D(:,2).*Points_3D(:,1) -Points_2D(:,2).*Points_3D(:,2) -Points_2D(:,2).*Points_3D(:,3)];

Q = reshape(Points_2D', M*2, 1);

M = P \ Q;

M = [M ; 1];

M = reshape(M, [], 3)';

%camera centre

q = M(:, 1:3);

m = M(:, 4);

Center = - inv(q) * m;

Result

The residue value obtained should be as small as possible and is found to be 0.0445 for the test images provided. The projection matrix obtained is shown below.

| M = | |||

| 0.7679 | -0.4938 | -0.0234 | 0.0067 |

| -0.0852 | -0.0915 | -0.9065 | -0.0878 |

| 0.1827 | 0.2988 | -0.0742 | 1.0000 |

The estimated location of camera is: <-1.5126, -2.3517, 0.2827>

|

Calculation of Fundamental Matrix

The fundamental matrix, of order 3x3, is used to map a feature point in one image to the corresponding epipolar line in the second image. By constraining the last element to 1 the system of equations is solved using 8 pairs of corresponding points.

SVD of matrix is obtained to get matrices U, S and V. The last column of V is gives the fundamental matrix when reshaped to a 3 x 3 matrix. The smallest Eigen Value is forced to zero to enforce rank 2.

Example of code

[n, ~] = size(Points_a);

w = [Points_a(:,1).*Points_b(:,1) Points_a(:,2).*Points_b(:,1) Points_b(:,1) Points_a(:,1).*Points_b(:,2) Points_a(:,2).*Points_b(:,2) Points_b(:,2) Points_a(:,1) Points_a(:,2) ones(n,1)];

[~, ~, V] = svd(w);

Q = V(:,9);

Q = reshape(Q',3,3);

[U, S, V] = svd(Q);

S(3,3) = 0;

F_matrix = (U*S*V')';

Results Obtained

The fundamental matrix obtained for the test images is:

| F = | ||

| -5.36264198382409e-07 | 7.90364770858070e-06 | -0.00188600204023563 |

| 8.83539184115792e-06 | 1.21321685010110e-06 | 0.0172332901014493 |

| -0.000907382264407756 | -0.0264234649922039 | 0.999500091906703 |

|

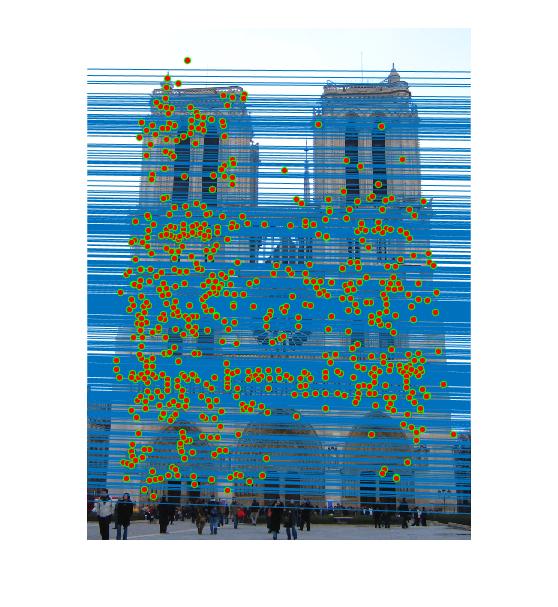

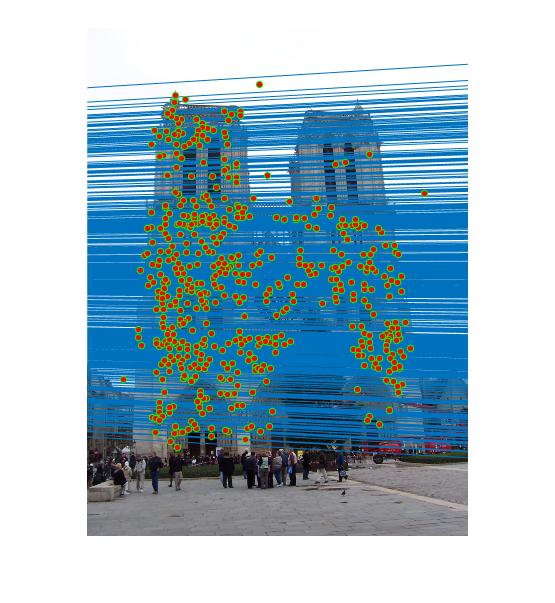

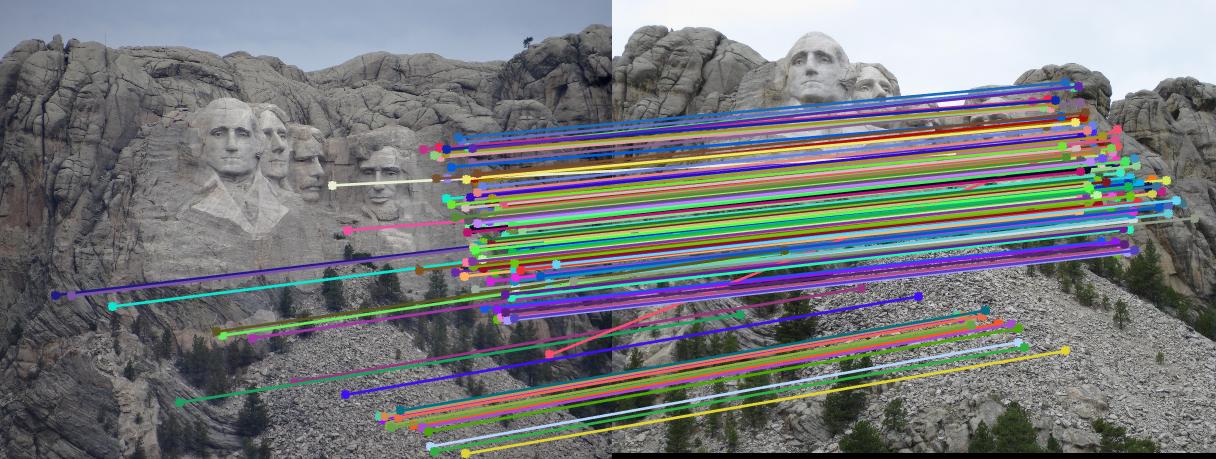

Best Fundamental Matrix using RANSAC

This best fundamental matrix that maps the matched points between the two images using RANSAC(RANdom SAmple Consensus). The SIFT algorithm is run to extract matching feature points between the two images and a subset of eight points is sampled at random and used to generate a fundamental matrix. The entire feature set is now multiplied by the fundamental matrix and the number of inliers calculated. This value is selected based on the probability of inliers from the entire set. The best fundamental matrix is selected as the one having maximum number of inlier points.

Example of code

%example code

for i = 1:3000

count = 0;

pts = randsample(size(matches_a,1), 8);

f1 = estimate_fundamental_matrix(matches_a(pts, :), matches_b(pts, :));

for i = 1:size(matches_a,1)

e = [matches_b(i,:) 1] * f1 * [matches_a(i,:) 1]';

if abs(e) < 0.05

count = count + 1;

end

end

if count > max_in

max_in = count;

Best_Fmatrix = f1;

end

end

Temp = zeros(size(matches_a,1),1);

for i = 1:size(matches_a,1)

Temp(i) = [matches_b(i,:) 1] * Best_Fmatrix * [matches_a(i,:) 1]';

end

[~ , k] = sort(abs(Temp), 'ascend');

inliers_a = matches_a(k(1:max_in), :);

inliers_b = matches_b(k(1:max_in), :);

Results

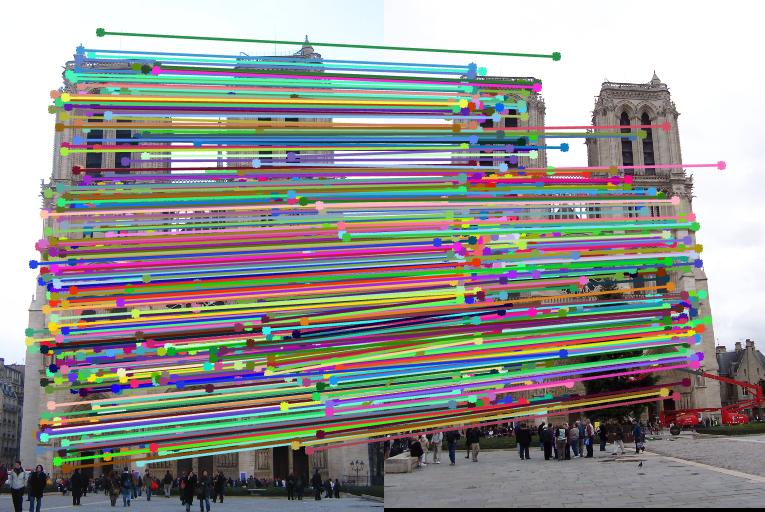

Notre Dame

|

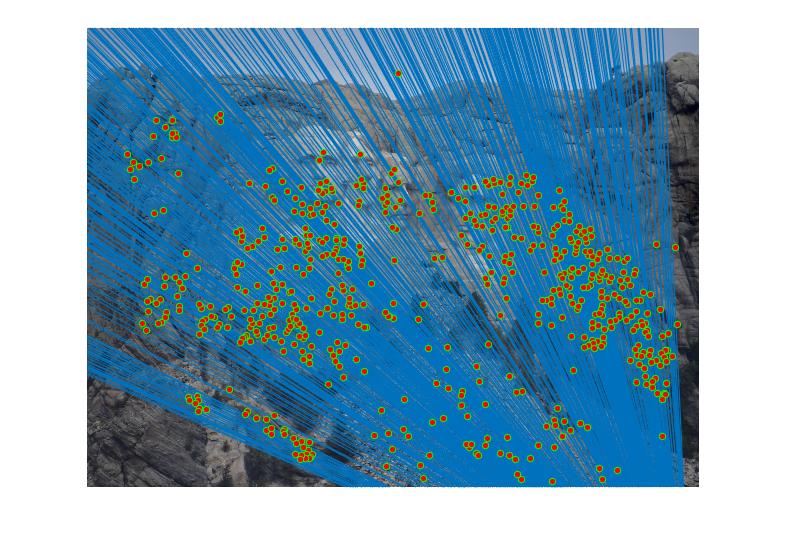

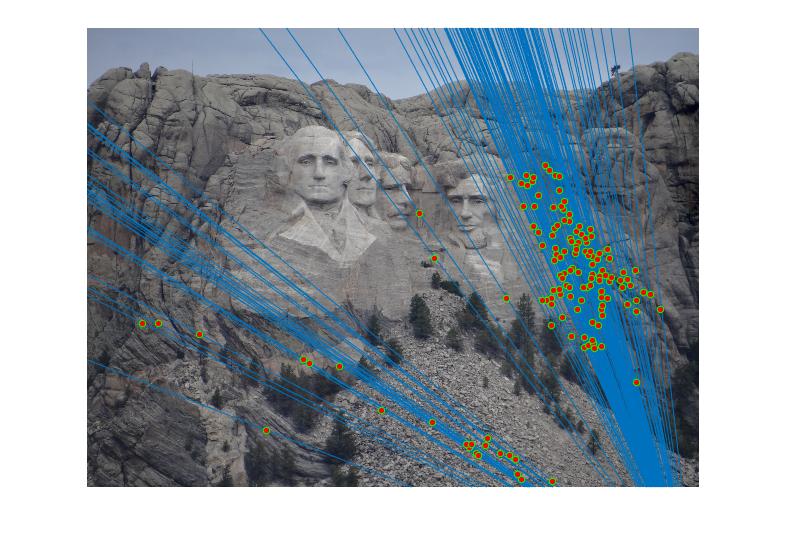

Mount Rushmore with threshold value 0.05

|

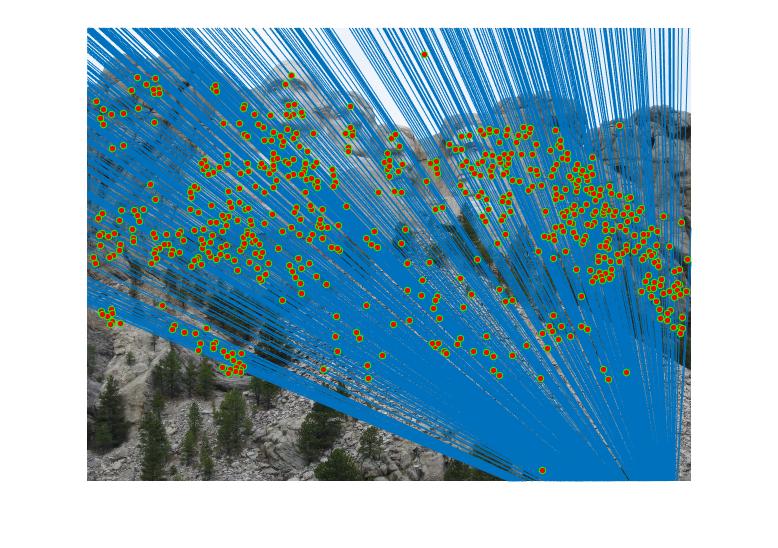

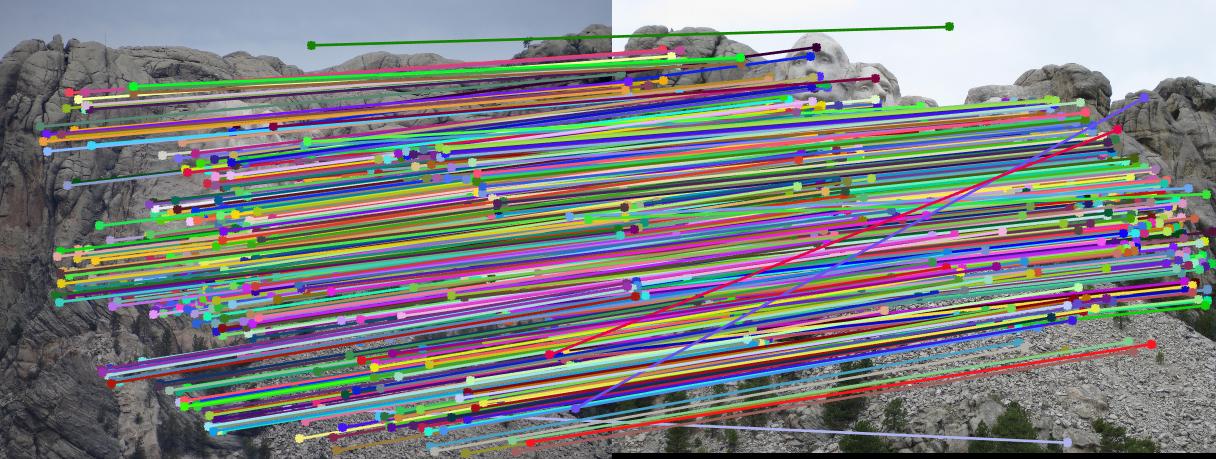

Mount Rushmore with threshold value 0.001

|