Project 5 / Face Detection with a Sliding Window

This project implements the sliding window detector of Dalal and Triggs, which independently classifies every image patch as face or non-face. To do so, we use a SIFT-like Histogram of Oriented Gradients (HoG) representation and classify millions of sliding windows at multiple scales.
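As a rough illustration of how the windows are enumerated at one scale, here is a minimal sketch. It is not the project's code: the function name `sliding_windows`, the 36-pixel template size, and the one-cell step are assumptions made for the example. Only the cell size of 6 matches a setting used in the experiments.

```python
def sliding_windows(img_h, img_w, scale, template_px=36, cell_px=6):
    """List window boxes (x_min, y_min, x_max, y_max) in original-image pixels.

    The image is assumed to be resized by `scale`, divided into HoG cells of
    `cell_px` pixels, and scanned with a template of `template_px` pixels
    that moves one cell at a time. template_px=36 is an assumed value.
    """
    scaled_h = int(img_h * scale)
    scaled_w = int(img_w * scale)
    cells_y = scaled_h // cell_px
    cells_x = scaled_w // cell_px
    template_cells = template_px // cell_px

    boxes = []
    for cy in range(cells_y - template_cells + 1):
        for cx in range(cells_x - template_cells + 1):
            x0 = cx * cell_px
            y0 = cy * cell_px
            # Map the box from the resized image back to the original image.
            boxes.append((
                round(x0 / scale),
                round(y0 / scale),
                round((x0 + template_px) / scale),
                round((y0 + template_px) / scale),
            ))
    return boxes


full_res = sliding_windows(72, 72, 1.0)   # 7 x 7 window positions
half_res = sliding_windows(72, 72, 0.5)   # one window covering the whole image
```

Each box would then be described by its HoG cells and scored by the classifier. Shrinking the image lets the same fixed-size template cover larger faces.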

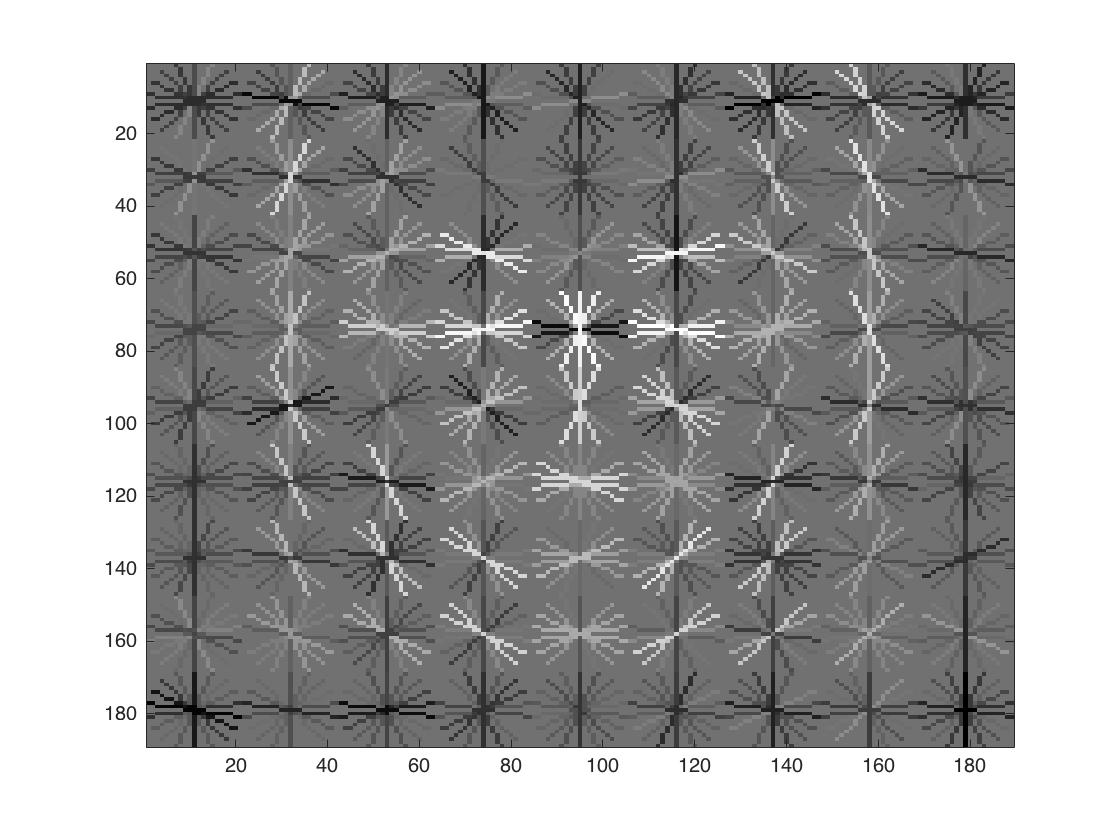

Visualization of HoG features

Performance Comparison

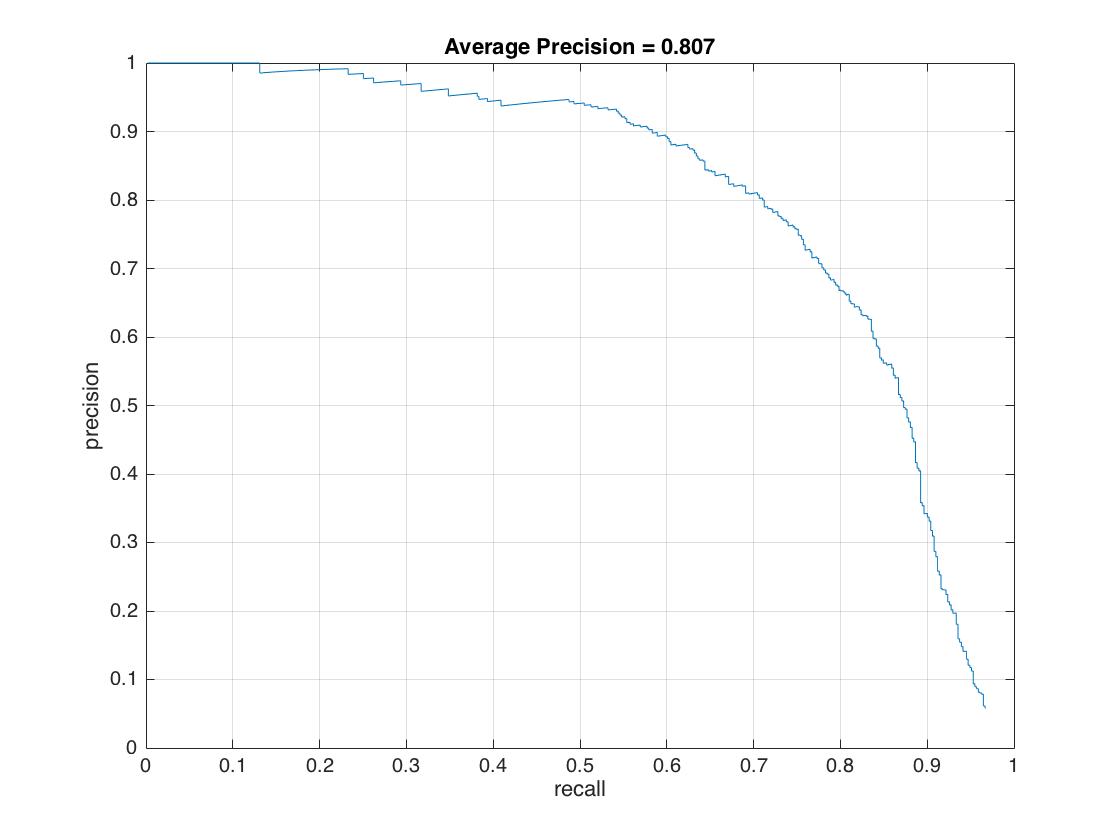

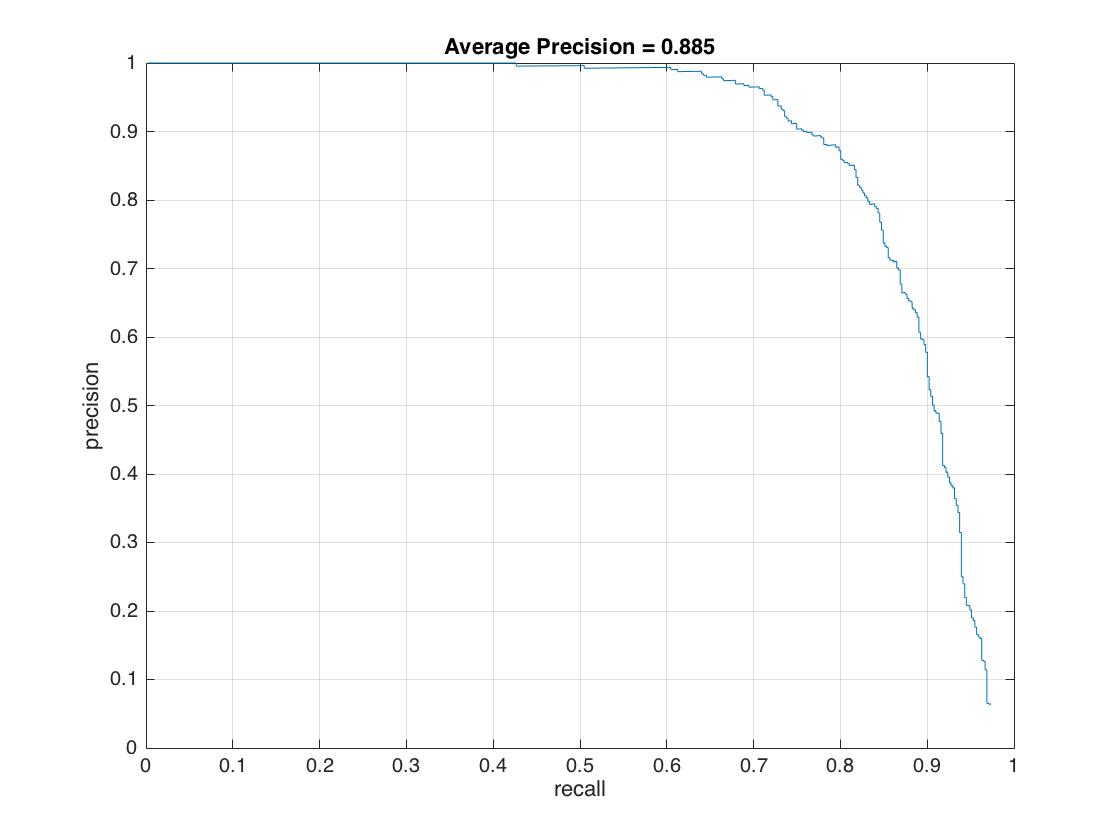

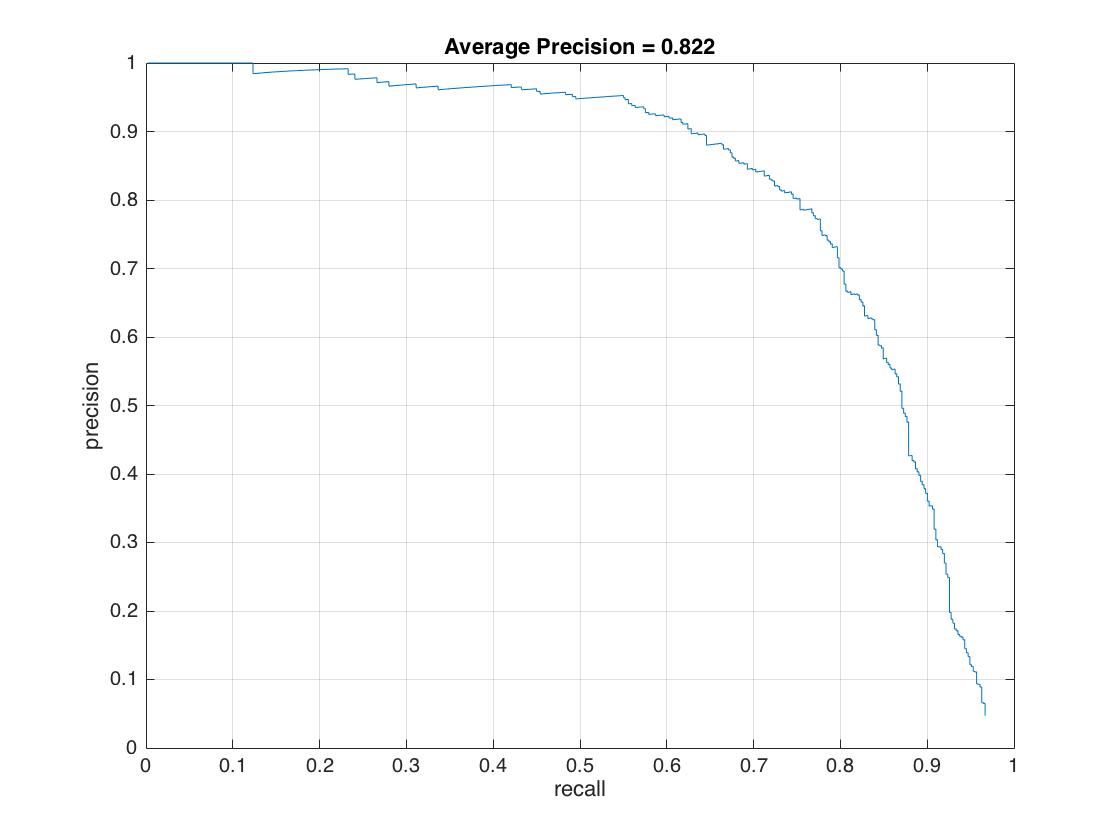

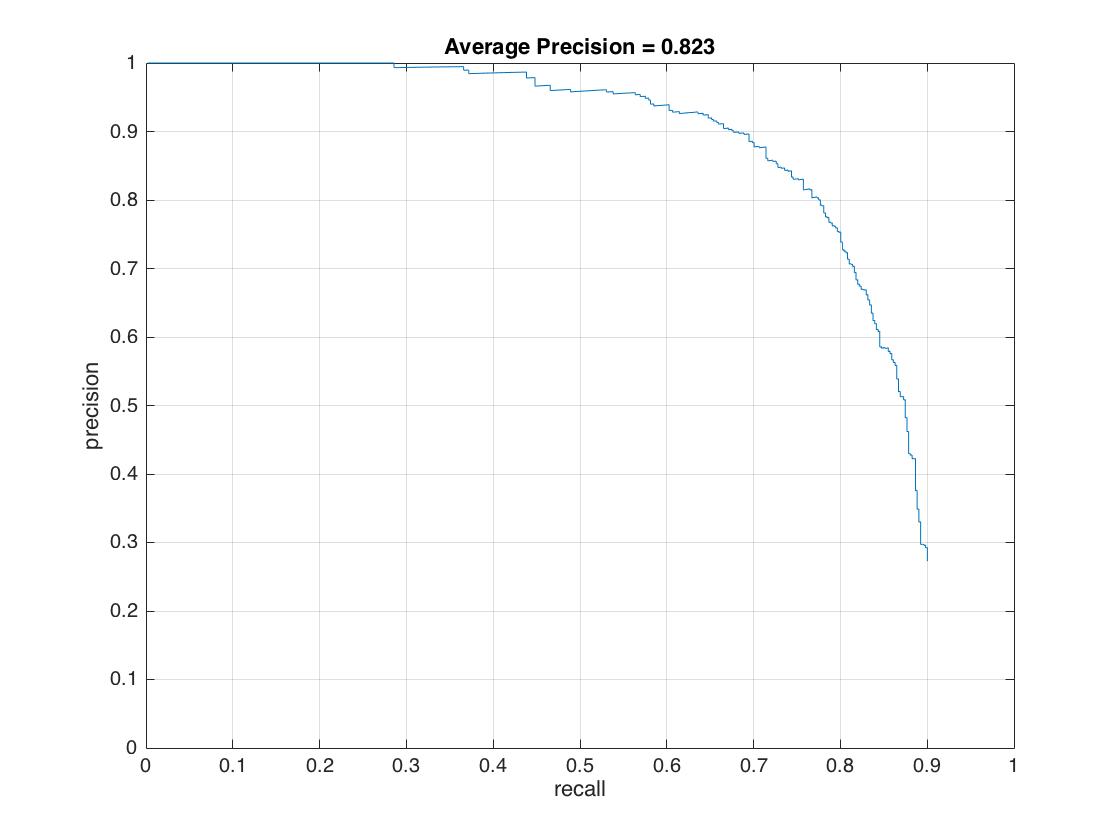

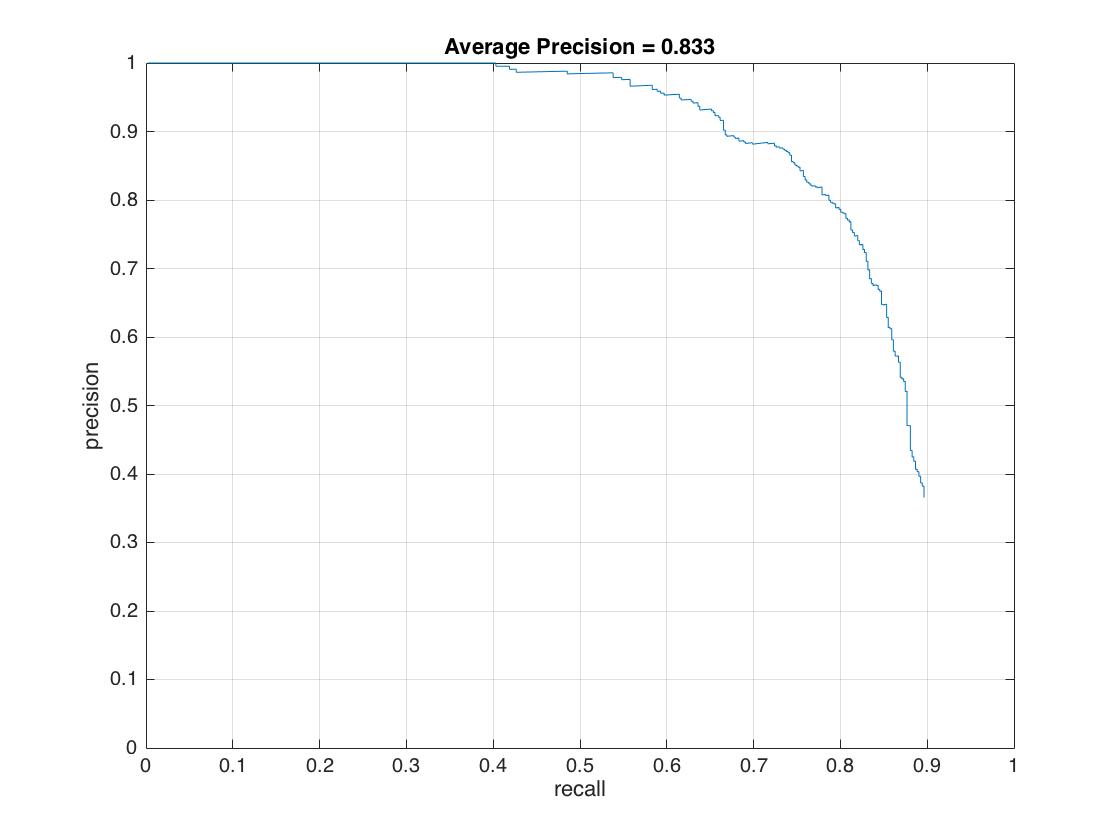

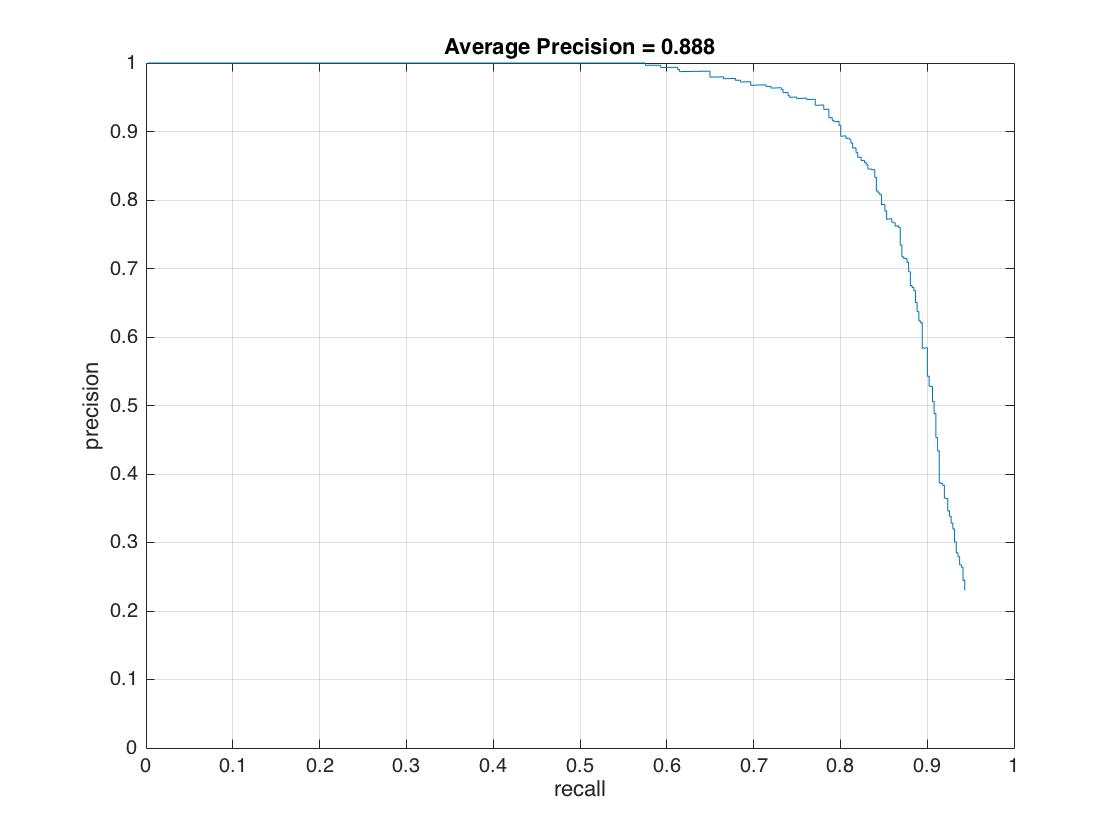

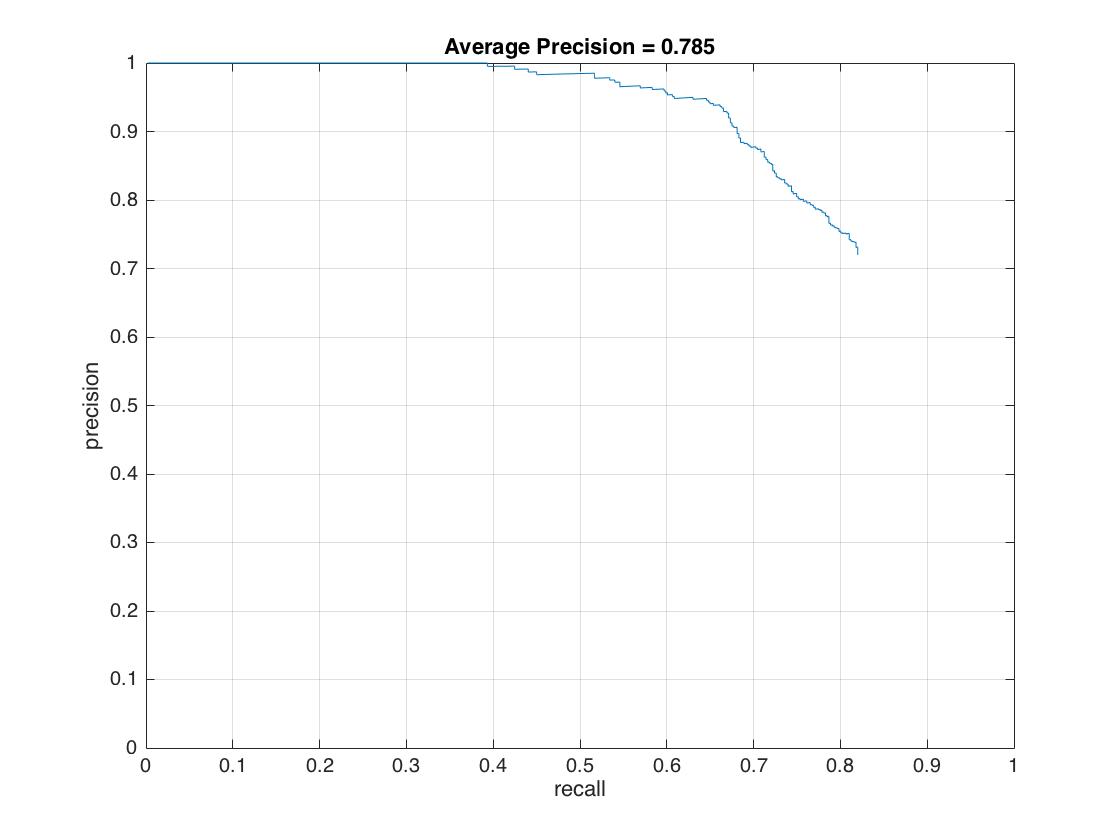

The table below compares performance under different parameter settings: single versus multiple scales, HoG cell size, with or without hard negative features, number of negative examples, and confidence level.

| Scale | Cell Size | With Hard Negative Features | Number of Negative Examples | Confidence Level | Average Precision |
|---|---|---|---|---|---|
| Single | 6 | No | 10000 | -0.9 | 60.4% |
| Multiple | 6 | No | 10000 | -0.9 | 80.7% |
| Multiple | 4 | No | 10000 | -0.9 | 88.5% |
| Multiple | 6 | No | 5000 | -0.9 | 82.2% |
| Multiple | 6 | Yes | 5000 | -0.9 | 82.3% |
| Multiple | 6 | Yes | 10000 | -0.9 | 83.3% |
| Multiple | 4 | Yes | 10000 | -0.9 | 88.8% |
| Multiple | 6 | Yes | 10000 | -0.1 | 78.5% |
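To make the confidence level column concrete, here is a minimal sketch that assumes it is a threshold on the classifier score, with detections below it discarded. The function name `keep_confident` and the boxes and scores are made up for illustration; only the two threshold values, -0.9 and -0.1, come from the table.

```python
def keep_confident(detections, threshold):
    """Keep (box, score) pairs whose score is at least `threshold`."""
    return [det for det in detections if det[1] >= threshold]


# Hypothetical detections: (x_min, y_min, x_max, y_max) and a classifier score.
detections = [
    ((10, 10, 46, 46), 1.30),
    ((80, 20, 116, 56), -0.05),
    ((40, 90, 76, 126), -0.50),
    ((5, 60, 41, 96), -0.95),
]

loose = keep_confident(detections, -0.9)   # keeps three detections
strict = keep_confident(detections, -0.1)  # keeps two detections
```

A stricter threshold passes fewer low-scoring windows, which removes false positives but can also discard true faces that scored weakly.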

And here are the average precision curves.


Analysis

Multiple scales, cell size, and confidence level are the three major contributors to accuracy. The scales I chose range from 1.0000 down to 0.1176, each 0.7 times the previous one (seven scales, 0.7^0 through 0.7^6), which raises AP from 60.4% to 80.7%. Reducing the cell size from 6 to 4 increases AP by about 8 percentage points (80.7% to 88.5%). Raising the confidence level from -0.9 to -0.1 cuts AP by about 5 points (83.3% to 78.5%), although it does return fewer false positives. Adding hard negative mining to the classifier makes no significant difference: AP changes by only 0.1 to 2.6 points across the comparable rows.
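The scale range quoted above can be reproduced with a short sketch. The function name `pyramid_scales` and the stopping cutoff of 0.1 are assumptions; the report only states the factor 0.7 and the endpoints 1.0000 and 0.1176.

```python
def pyramid_scales(factor=0.7, min_scale=0.1):
    """Return scales 1, factor, factor**2, ... down to `min_scale`.

    min_scale=0.1 is an assumed cutoff, chosen so the list ends at 0.7**6.
    """
    scales = []
    scale = 1.0
    while scale >= min_scale:
        scales.append(round(scale, 4))
        scale *= factor
    return scales


scales = pyramid_scales()
print(scales)  # [1.0, 0.7, 0.49, 0.343, 0.2401, 0.1681, 0.1176]
```

The seventh and last value, 0.7^6 ≈ 0.1176, matches the smallest scale reported.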