Speech reading has been subject of much research due to its obvious benefits in language interpretation by machines. In our project, we intend to investigate the coupling between audio and visual information to assist in interpreting what a person is saying.

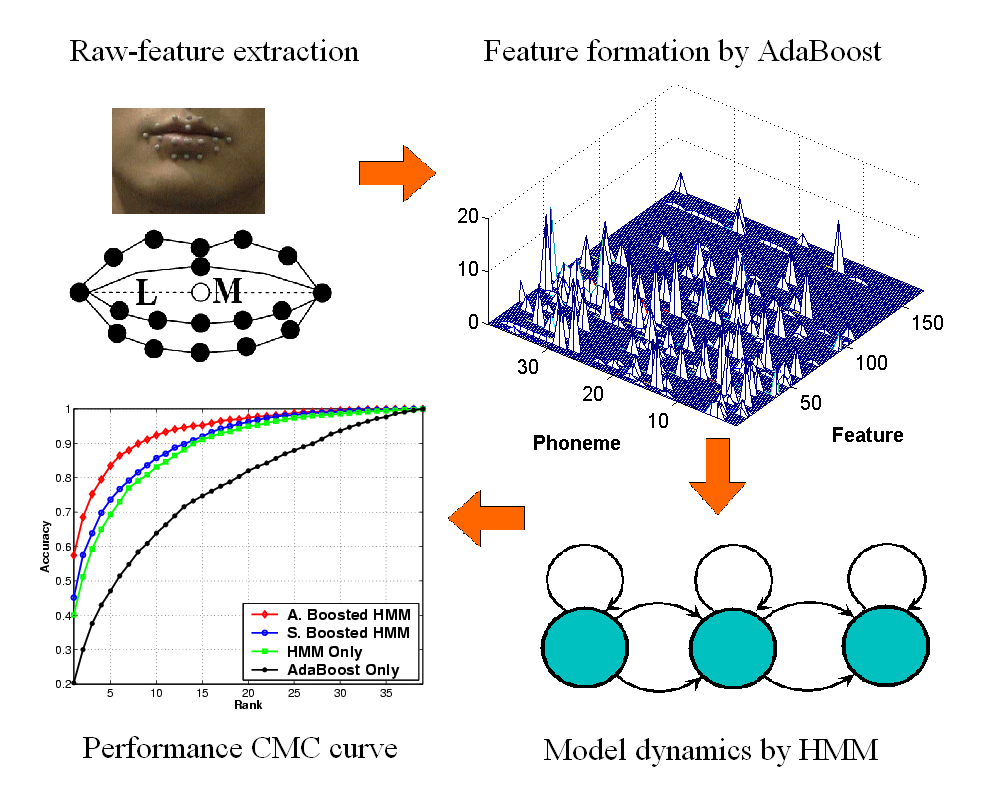

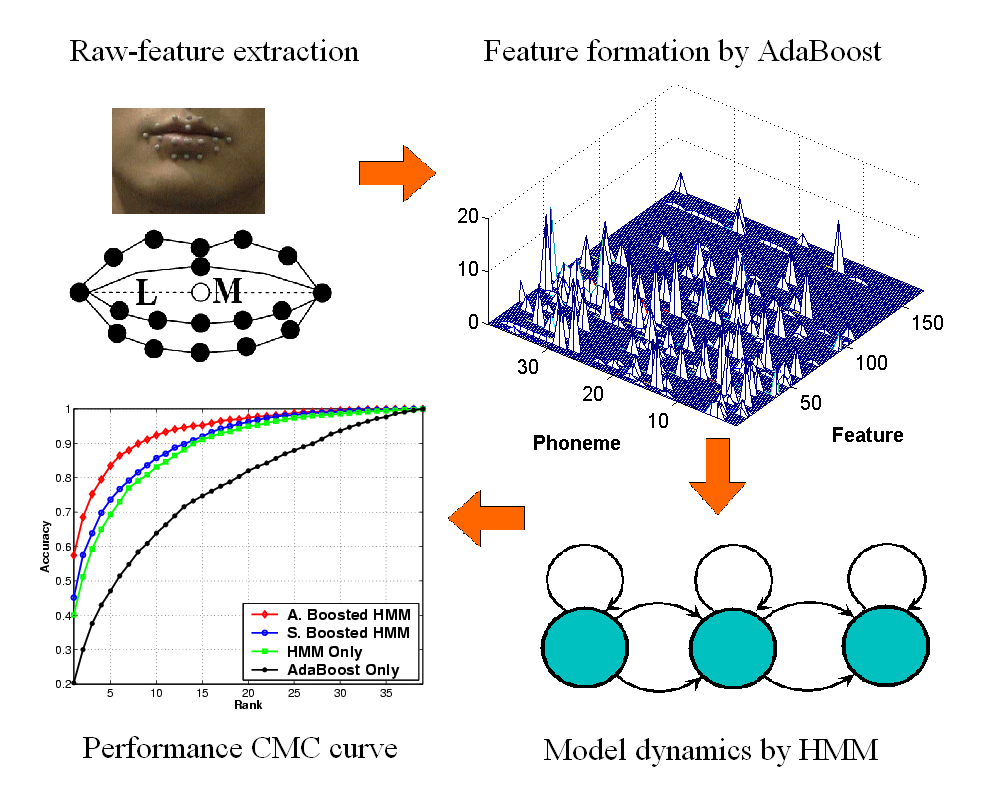

We propose a new approach for combining acoustic and visual measurements to aid in recognizing lip shapes of a person speaking. Our method relies on computing the maximum likelihoods of (a) HMM used to model phonemes from the acoustic signal, and (b) HMM used to model visual features motions from video. One significant addition in this work is the dynamic analysis with features selected by AdaBoost, on the basis of their discriminant ability. This form of integration, leading to boosted HMM, permits AdaBoost to find the best features first, and then uses HMM to exploit dynamic information inherent in the signal. Another contribution is the design of a new variant of AdaBoost M2 algorithm to address the internal asymmetry of the multi-class classification problem.

Compressed file (84MB): gtsr.rar

Pei Yin, Irfan Essa, James M. Rehg, "Asymmetrically Boosted HMM for Speech Reading". in Proc. IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2004), pp II755-761, Jun. 2004 (pdf) (bibtex)

Pei Yin, Irfan Essa, James M. Rehg, "Boosted Audio-Visual HMM for Speech Reading". in Proc. IEEE International Workshop on Analysis and Modeling of Faces and Gestures (AMFG), pp 68-73, Oct. 2003/held in conjunction with ICCV-2003. A version of this paper also appears in Proc. Asilomar Conference on Signals, Systems, and Computers, pp 2013-2018, Nov. 2003 as invited paper. (abstract) (bibtex)

|

Copyright © 1997-2003 |

Last Updated Auguest 16, 2003.