School of Computer Science Cybersecurity Researchers Are Securing the Future

Cybersecurity affects everything from policy to technology. Georgia Tech is at the forefront of many conversations about attribution and government responsibility. None of these discussions would be possible, though, without secure systems the public can rely on. In the School of Computer Science, we identify emerging threats and create the systems that defend against them.

New attacks

Keeping a finger on the pulse of the latest attack methods is one of our strengths. To create a more secure world, we must imagine threats beyond traditional devices. Yet the depth of our expertise ensures we also stay on top of traditional methods. By tracking both kinds of attacks, we can build the necessary solutions.

Internet of things (IoT) devices let us control the temperature of our home or track the food in our fridge from afar, but they are often built on simple software stacks. “All of these things were never meant to be connected to anything, so there is no notion of security when the code was designed and that creates a lot of vulnerabilities,” Professor Milos Prvulovic said.

One such vulnerability is side channels, the electromagnetic signals a device emits while it computes. An attacker can monitor these side channels to determine how a program runs, down to the moment it uses a cryptographic key, and then steal that key. Yet Prvulovic monitors these side channels, too, determining whether a device is running the expected programs and then updating it with more secure software. Prvulovic is applying the same technique to hardware. He measures radio frequency signals to determine how much power is going through a chip and to ensure that chips operate as they should.

Many attackers still rely on classic phishing, but they are trying more sophisticated techniques to monetize successful attacks. For example, notorious tech support scammers use search poisoning and malicious advertisements to get victims to call them. These attacks combine online and phone channels, which makes them cross-channel attacks. Professor Mustaque Ahamad researches how to understand and defend against cross-channel attacks. Securing voice channels is a growing cybersecurity field as voice-controlled IoT devices become common.

New defenses

Much of our work is preventative. We secure data users already have. The first step in data protection is authenticating users, and biometrics are the best tool. “It’s based on who you are, not what you know,” said Professor Wenke Lee. A real-time Captcha technique offers new ways to authenticate users, but it still has shortcomings. Lee is working on how to preserve the privacy of biometrics so that if a server were compromised, an attacker couldn’t use the stolen data to impersonate users.

Securing the cloud is the next frontier. Associate Professor Alexandra Boldyreva works on searchable encryption, which would let users store encrypted data remotely in the cloud and still search it. She also analyzes the cryptographic security guarantees of the newest networking and web authentication protocols. Associate Professor Vladimir Kolesnikov studies secure computation. This form of cryptography protects users’ data during computation, so they can securely share healthcare data or make private blockchain transactions. With the latter, users can verify they have enough money in their wallets for a transaction without having to disclose the exact amount.
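To make the idea of searchable encryption concrete, here is a toy sketch in Python. It is not Boldyreva's scheme or any published construction. It assumes a simple keyed-token index in which the client turns each keyword into an HMAC-SHA256 token, so the server can match tokens without ever seeing the words. The names `keyword_token`, `ToyServer`, and `upload` are hypothetical, and the sketch leaves out encryption of the documents themselves.

```python
import hashlib
import hmac
import secrets
from collections import defaultdict


def keyword_token(key, keyword):
    """Derive a deterministic search token for a keyword with HMAC-SHA256."""
    normalized = keyword.strip().lower().encode("utf-8")
    return hmac.new(key, normalized, hashlib.sha256).hexdigest()


class ToyServer:
    """Hypothetical untrusted server: it sees only tokens and document IDs."""

    def __init__(self):
        self.index = defaultdict(set)

    def add(self, token, doc_id):
        self.index[token].add(doc_id)

    def search(self, token):
        # Return a copy so callers cannot mutate the stored index.
        return set(self.index.get(token, set()))


def upload(server, key, doc_id, text):
    """Client side: index every distinct word of a document under its token."""
    for word in set(text.split()):
        server.add(keyword_token(key, word), doc_id)


# Example usage with made-up documents.
client_key = secrets.token_bytes(32)  # stays on the client
server = ToyServer()
upload(server, client_key, "doc1", "blood pressure report")
upload(server, client_key, "doc2", "blood test")
matches = server.search(keyword_token(client_key, "blood"))
```

Because the tokens are deterministic, this toy version reveals to the server which documents share a keyword and when a search is repeated. Limiting that kind of leakage is one of the problems research on searchable encryption addresses.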

New advances

We combine our attack forecasting and prevention measures in our research labs. Our malware analysis lab offers a secure environment to run malware. Analysts can collaboratively explore and enumerate malware behaviors, which is far more efficient than the current industry practice of reverse engineering malware in silos.

We are securing machine learning (ML) with a similar approach. Hackers can introduce dirty training data to corrupt an ML model or morph malware to evade an ML model. To combat adversarial ML, Lee’s team measures how robust a model is. They are creating an open-source framework that researchers can use to test their models’ strengths and improve their robustness.
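One simple way to picture a robustness measurement is sketched below in Python. It is an illustration only, not the method or framework Lee's team is building. It assumes a linear classifier with labels of +1 or -1 and an attacker who may change each input feature by at most `epsilon`. Under those assumptions, the worst-case attack lowers the classifier's score for the true label by `epsilon` times the sum of the absolute weights, so the share of inputs that stay correctly classified can be computed exactly. The function name `robust_accuracy` and the sample data are hypothetical.

```python
def robust_accuracy(weights, bias, samples, labels, epsilon):
    """Fraction of samples a linear classifier still classifies correctly
    when every feature may be shifted by at most epsilon."""
    l1_norm = sum(abs(w) for w in weights)
    robust = 0
    for x, y in zip(samples, labels):
        score = sum(w * xi for w, xi in zip(weights, x)) + bias
        # Worst case: the attacker moves the score against the true label
        # by epsilon * ||weights||_1.
        worst_margin = y * score - epsilon * l1_norm
        if worst_margin > 0:
            robust += 1
    return robust / len(samples)


# Made-up model and data for illustration.
weights, bias = [1.0, -1.0], 0.0
samples = [[2.0, 0.0], [0.0, 2.0], [0.5, 0.0], [0.0, 0.25]]
labels = [1, -1, 1, -1]

for eps in (0.0, 0.2, 0.5):
    print(eps, robust_accuracy(weights, bias, samples, labels, eps))
```

Plotting this fraction against `epsilon` shows how quickly accuracy degrades as the attacker's budget grows. For nonlinear models the worst case generally cannot be computed in closed form and has to be estimated or bounded instead.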

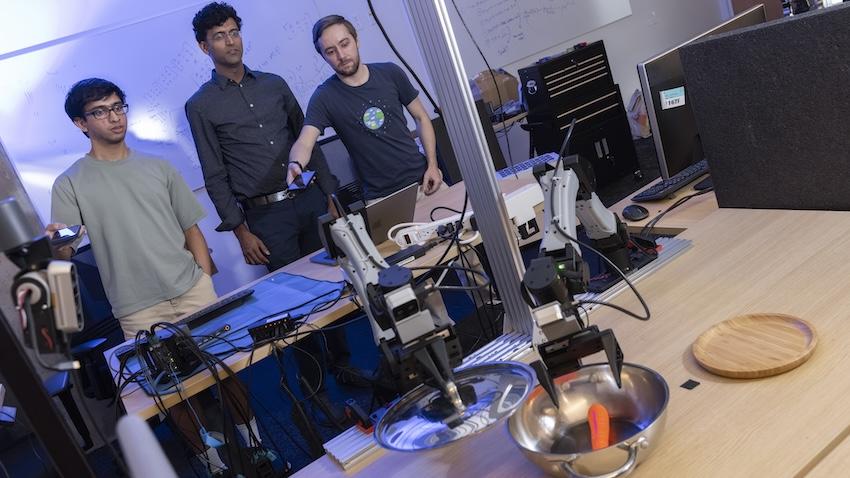

Not all automation is bad. Assistant Professor Taesoo Kim sees the future of cybersecurity “as autonomous systems that assist human security experts handling large-scale security problems with small number of experts.” With this in mind, he has been working on automated techniques to find bugs and on cyber reasoning systems that create autonomous attacks and defenses.

Our efforts may be wide-ranging, but we are consistently creating the future of cybersecurity. Our cybersecurity researchers patch vulnerabilities in devices that many people didn’t even realize attackers had compromised, and they ensure data is secure in our ever-connected world. Trust is hard-won in the cybersecurity field, but our systems make people more confident their data is safe.