Researchers Envision the Future of User Interfaces in Road Vehicles

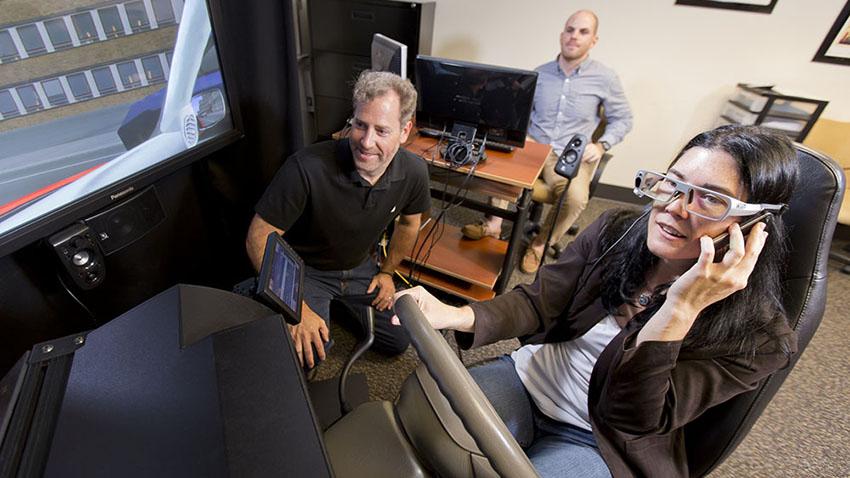

Georgia Tech is home to one of the leading research groups studying user interfaces for on-road vehicles. Work on driving and in-vehicle technologies has been a pillar of the Sonification Lab for more than a decade, and with the advent of electric and autonomous vehicles, the researchers are addressing new opportunities and challenges.

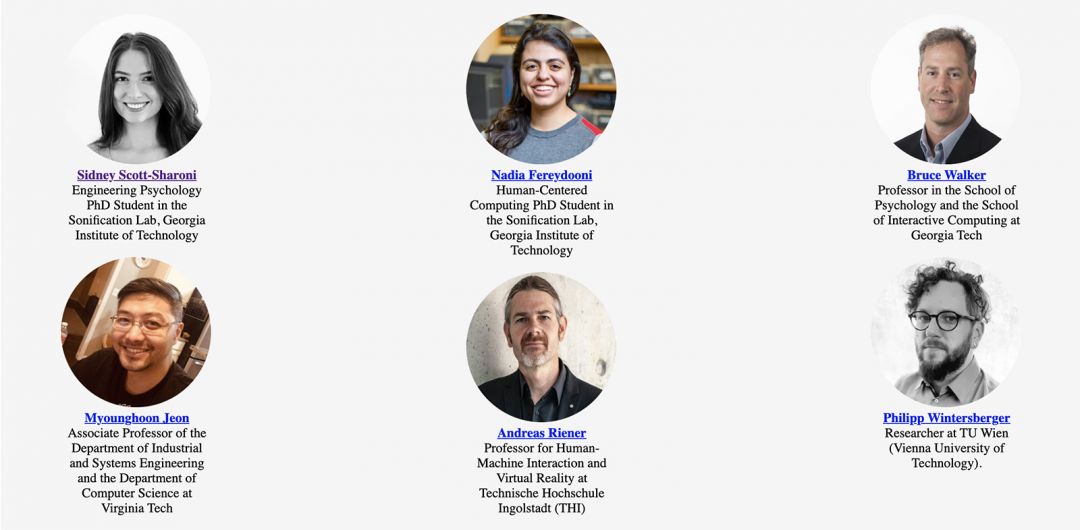

At the 13th International ACM Conference on Automotive User Interfaces and Interactive Vehicular Applications (AUTO-UI), held Sept. 9-10 and Sept. 13-14, Georgia Tech researchers are co-leading the workshop "To Customize or Not to Customize: Is That the Question?", which focuses on how user needs can be met when interacting with autonomous vehicles.

The workshop will explore how to define the role of customization in user interfaces, determine its range, and dissect its taxonomy, with the goal of building a more recognizable and comprehensive model for engineers and researchers.

“Participants will discuss what features of automotive UIs can be standardized and what should be customized, and work towards creating a taxonomy,” said Sidney Scott-Sharoni, Ph.D. student in Engineering Psychology and workshop co-organizer.

Workshop Organizers

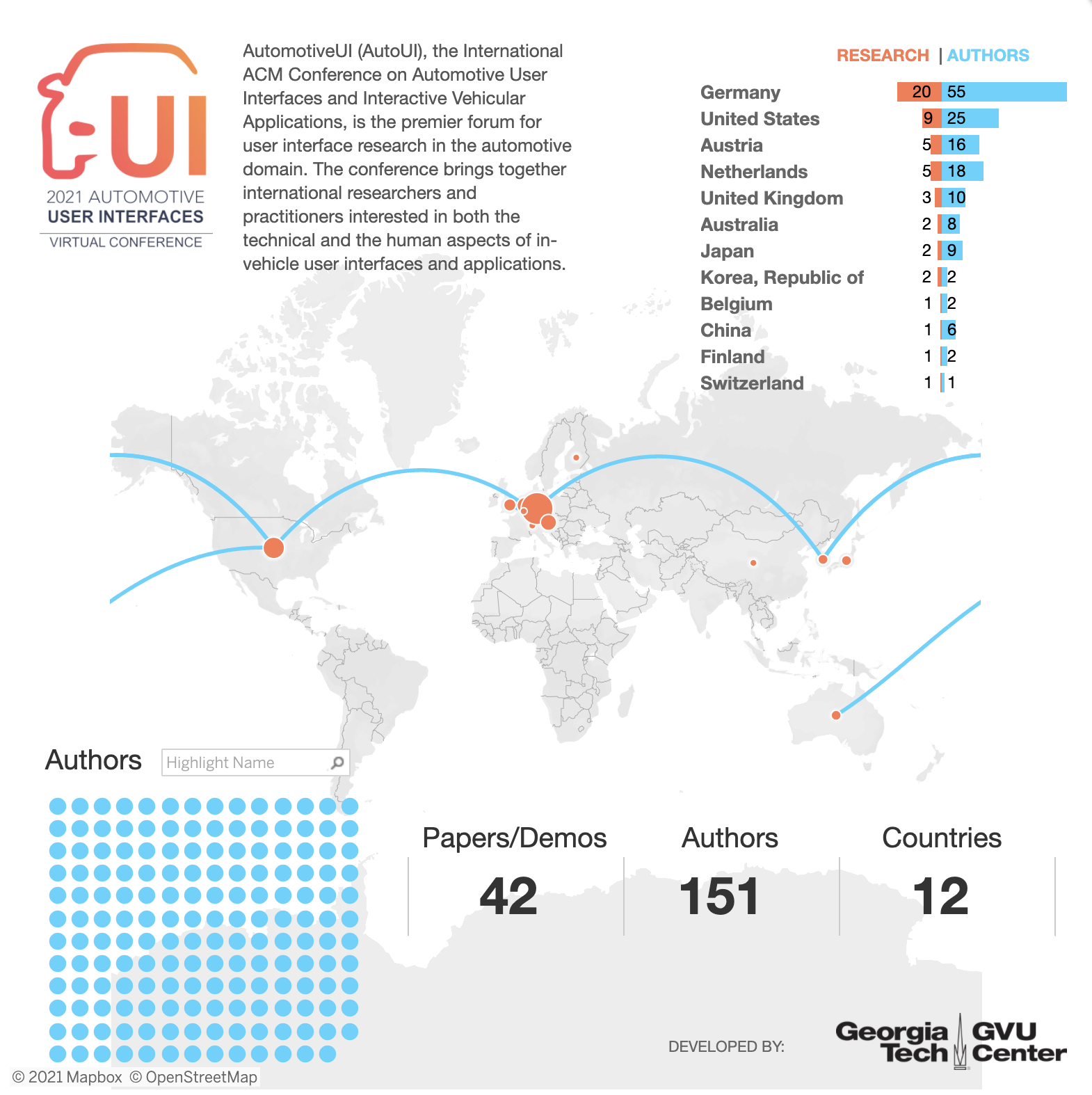

AUTO-UI’s technical program includes work by 151 researchers from 12 countries. Germany leads in accepted papers and demos as well as in contributing authors. Together, Germany and the United States account for 69 percent of the main program.

Georgia Tech alum Myounghoon "Philart" Jeon (MS and Ph.D. in Engineering Psychology) of Virginia Tech is a co-author on five papers and demos, the most for any single author at AUTO-UI 2021.

Bruce Walker, the director of the Sonification Lab and professor in the School of Psychology and School of Interactive Computing, said his lab approaches in-vehicle technology research with many users in mind.

“Modern vehicles include many secondary 'non-driving related tasks,' ranging from adjusting the entertainment system to navigation, as well as occupants, unfortunately, checking their mobile devices,” said Walker. “While it is generally safest not to do secondary tasks while driving, carefully designed auditory user interfaces and accessories with multimodal displays can allow safer completion of many of these tasks.

“Multimodal displays also can serve as assistive technologies to help novice, tired, or angry drivers perform better, as well as helping drivers with special challenges, such as those who have had a traumatic brain injury or other disability.”

As autonomous vehicles become more common on the roads, there will be even greater opportunity to let the car drive itself, allowing occupants to sit back and focus on more engaging non-driving tasks such as reading a book, watching a movie, or even using a virtual reality system. Even so, passengers will need to keep an eye (or ear) on what's happening around the vehicle. Walker's research aims to support this advanced "situation awareness."

"It requires great trust in the technology to watch a movie while letting your car take care of the driving," Walker explained. "Each person expects and desires different behaviors from their automated vehicle. Our research into multimodal user interfaces, and this workshop at AUTO-UI on automation customization, is pushing towards this future where we can take back our commute."

Toyota currently sponsors the lab's research in the automotive UI space. Learn more about the Sonification Lab at http://sonify.psych.gatech.edu/.